Google is splitting its next wave of AI silicon into two lanes: TPU 8t for heavy model training and TPU 8i for fast inference. The pitch is straightforward enough: as AI agents move from chatbot demos to multi-step workflows, one chip design no longer fits every job.

That matters because the bottleneck in AI is shifting. Training still needs brute force, but serving increasingly busy agent systems now demands low latency, higher memory bandwidth, and fewer wasted cycles. Google is betting that specialization, not a one-size-fits-all accelerator, is how it keeps pace with rivals pushing their own custom silicon and hyperscaler cloud stacks.

TPU 8t is built for massive training runs

TPU 8t is the compute monster in the pair. Google says it can cut frontier model development from months to weeks, and that a single superpod scales to 9,600 chips with two petabytes of shared high-bandwidth memory. The company also says a superpod delivers 121 exaFLOPS of compute, nearly 3x the compute performance per pod of the previous generation.

There is a practical reason for the ambition. Large-model training has become an infrastructure arms race, and every gain in throughput or "goodput" can save real time on giant jobs that would otherwise stall on failures, network issues, or checkpoint restarts. Google says TPU 8t is engineered for over 97% goodput, with rerouting and optical circuit switching designed to keep training moving.
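
To put the goodput figure in perspective, here is a rough sketch in Python. It assumes goodput means the fraction of wall-clock time spent on useful training progress, which is the common usage but is not spelled out in Google's claim. The 30-day job size and the 90% baseline are hypothetical values chosen for illustration, not figures from Google.

```python
def wall_clock_days(productive_days: float, goodput: float) -> float:
    """Wall-clock days needed to accumulate `productive_days` of useful training."""
    if not 0.0 < goodput <= 1.0:
        raise ValueError("goodput must be in the interval (0, 1]")
    return productive_days / goodput


PRODUCTIVE_DAYS = 30.0    # hypothetical job size, not a Google figure
BASELINE_GOODPUT = 0.90   # hypothetical comparison point, not a Google figure
TPU_8T_GOODPUT = 0.97     # lower bound of Google's "over 97%" claim

for label, goodput in (("baseline", BASELINE_GOODPUT), ("TPU 8t claim", TPU_8T_GOODPUT)):
    total = wall_clock_days(PRODUCTIVE_DAYS, goodput)
    lost = total - PRODUCTIVE_DAYS
    print(f"{label}: {total:.1f} days wall-clock, {lost:.1f} days lost")
```

Under these assumptions, the job loses roughly 0.9 days to stalls at 97% goodput versus about 3.3 days at 90%. On a pod of thousands of chips, that gap is a lot of idle hardware.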

- TPU 8t superpod scale: 9,600 chips

- Shared memory: two petabytes

- Compute: 121 exaFLOPS

- Performance claim: nearly 3x compute performance per pod
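
The pod-level numbers above imply rough per-chip figures. The sketch below simply divides Google's stated totals by the chip count. It assumes decimal units (1 PB = 10^15 bytes) and treats "two petabytes" as exact even though it is clearly a rounded figure, so the results are back-of-envelope estimates, not official per-chip specifications. The numeric precision behind the exaFLOPS figure is not stated in the claim.

```python
CHIPS_PER_SUPERPOD = 9_600
SHARED_HBM_BYTES = 2e15   # "two petabytes", assuming decimal petabytes
POD_FLOPS = 121e18        # 121 exaFLOPS, precision format not specified

# Divide the pod totals evenly across chips.
hbm_per_chip_gb = SHARED_HBM_BYTES / CHIPS_PER_SUPERPOD / 1e9
pflops_per_chip = POD_FLOPS / CHIPS_PER_SUPERPOD / 1e15

print(f"Implied HBM per chip: ~{hbm_per_chip_gb:.0f} GB")
print(f"Implied compute per chip: ~{pflops_per_chip:.1f} petaFLOPS")
```

That works out to roughly 208 GB of high-bandwidth memory and about 12.6 petaFLOPS per chip, if the totals are spread evenly.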

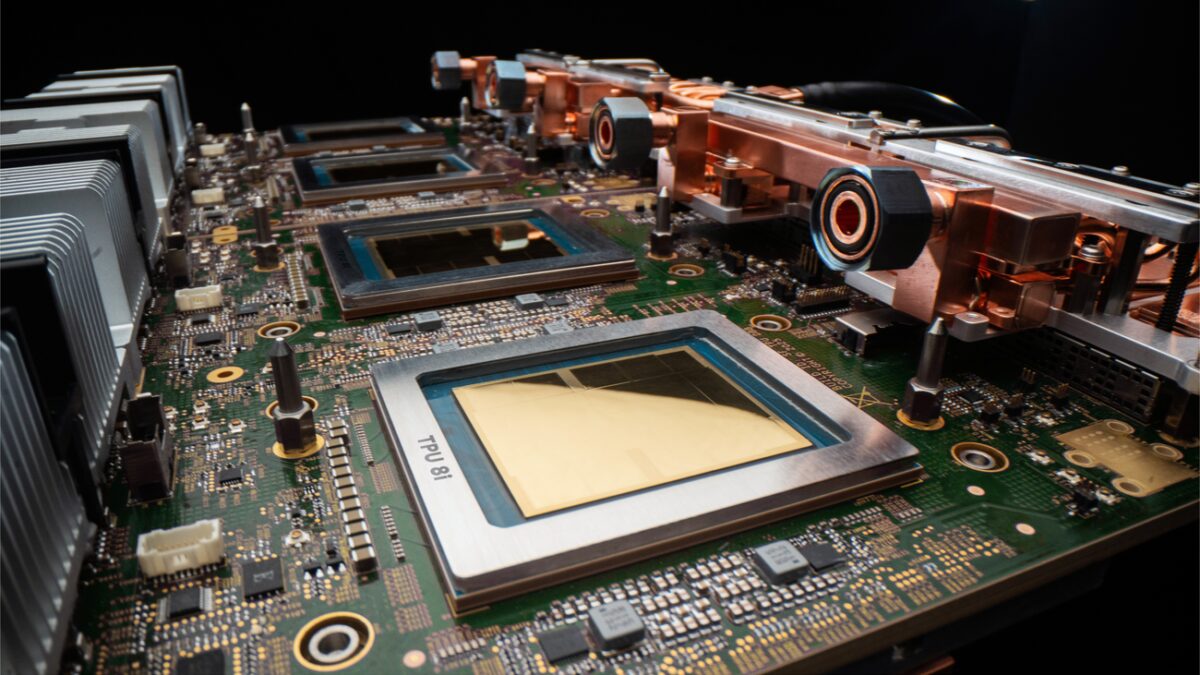

TPU 8i targets low-latency inference

TPU 8i is the server-side workhorse for inference, and Google is clearly aiming at the messy reality of agentic AI, where one request can trigger a chain of model calls, tools, and follow-up reasoning. The chip pairs 288 GB of high-bandwidth memory with 384 MB of on-chip SRAM, doubles the physical CPU hosts per server using Axion Arm-based CPUs, and doubles inter-chip interconnect (ICI) bandwidth to 19.2 Tb/s.

Google says those changes deliver 80% better performance-per-dollar than the previous generation and can nearly double customer volume at the same cost. That is a familiar cloud pitch, but it is also the one customers care about most: inference bills, not benchmark slides, tend to decide whether an AI product survives contact with reality.

- Memory: 288 GB high-bandwidth memory

- On-chip SRAM: 384 MB

- ICI bandwidth: 19.2 Tb/s

- Performance claim: 80% better performance-per-dollar
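
The two headline claims, 80% better performance-per-dollar and "nearly double" the customer volume at the same cost, are consistent with each other, as a quick calculation shows. This sketch assumes served volume scales linearly with performance-per-dollar, which is a simplification. The function names are illustrative.

```python
def volume_multiplier(perf_per_dollar_gain: float) -> float:
    """Served volume at the same spend, relative to the previous generation."""
    return 1.0 + perf_per_dollar_gain


def cost_fraction(perf_per_dollar_gain: float) -> float:
    """Spend needed for the same volume, relative to the previous generation."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


GAIN = 0.80  # Google's "80% better performance-per-dollar" claim

print(f"Same budget: {volume_multiplier(GAIN):.1f}x the volume")
print(f"Same volume: {cost_fraction(GAIN):.0%} of the previous cost")
```

At the same spend, that is 1.8x the volume, which matches "nearly double". Read the other way, the same workload would cost about 56% of what it did before.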

Google is pushing the full-stack argument again

What Google is really selling here is not just chips, but control. TPU 8t and TPU 8i both run on Axion Arm-based CPU hosts, support native JAX, MaxText, PyTorch, SGLang, and vLLM, and offer bare metal access for customers who want direct control of the hardware without virtualization overhead. That is classic hyperscaler leverage: if you own the silicon, the network, the cooling, and the software stack, you can tune the whole system around one workload instead of hoping generic hardware behaves nicely.

The timing also fits the market. Competitors are racing to lock in their own AI infrastructure ecosystems, while the biggest cloud buyers are under pressure to squeeze more output from constrained power budgets. Google says TPU 8t and TPU 8i deliver up to two times better performance-per-watt than Ironwood, and that its data centers now deliver six times more computing power per unit of electricity than five years ago.
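
The efficiency claims can be translated into more familiar terms with a little arithmetic. The sketch below assumes the six-fold data center gain compounded evenly over the five years, which Google does not state, and uses a hypothetical fixed power budget to show what "up to two times" performance-per-watt would mean at the upper bound.

```python
DATACENTER_GAIN = 6.0     # "six times more computing power per unit of electricity"
YEARS = 5
PERF_PER_WATT_GAIN = 2.0  # upper bound of the "up to two times" claim vs Ironwood

# Annualized improvement, assuming steady compounding (an assumption).
annual_factor = DATACENTER_GAIN ** (1 / YEARS)

# Compute available from the same power envelope, relative to Ironwood.
POWER_BUDGET_MW = 100.0   # hypothetical site power budget
ironwood_equivalent_mw = POWER_BUDGET_MW * PERF_PER_WATT_GAIN

print(f"Implied yearly efficiency gain: ~{(annual_factor - 1):.0%}")
print(f"{POWER_BUDGET_MW:.0f} MW of TPU 8 does the work of "
      f"up to {ironwood_equivalent_mw:.0f} MW of Ironwood")
```

Under the steady-compounding assumption, a six-fold gain over five years is roughly a 43% improvement per year.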

TPU 8 availability later this year

Both chips will be generally available later this year, and customers can request more information now. That leaves Google with a straightforward task: prove that its split design really does make AI agents faster, cheaper, and easier to scale than the brute-force alternatives. If it works, TPU 8t and TPU 8i could make the case that the agentic era is less about bigger models and more about better plumbing.