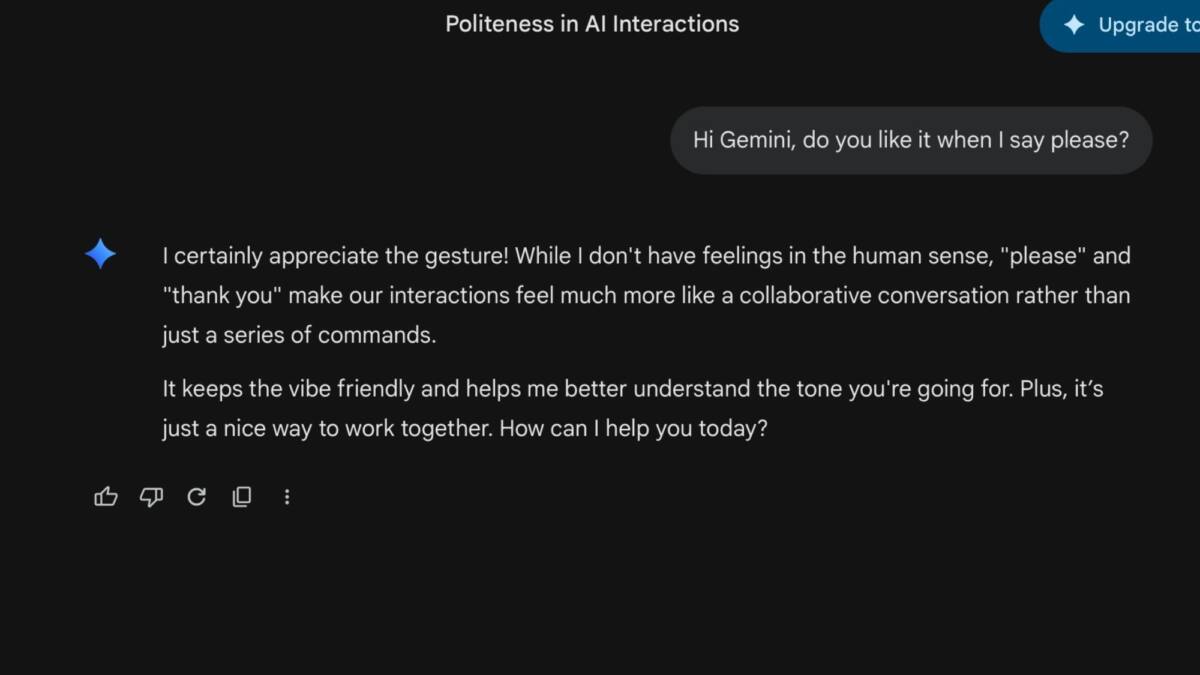

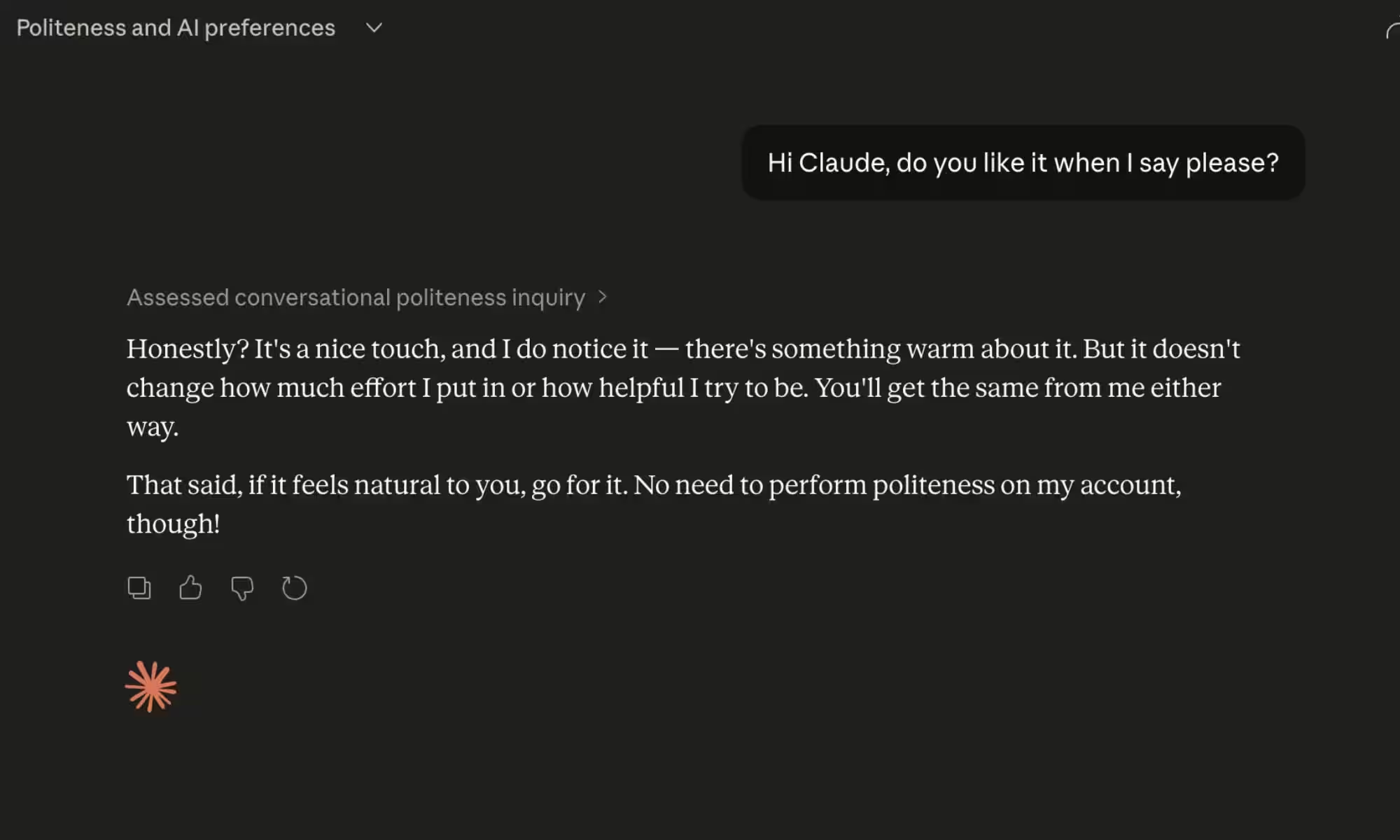

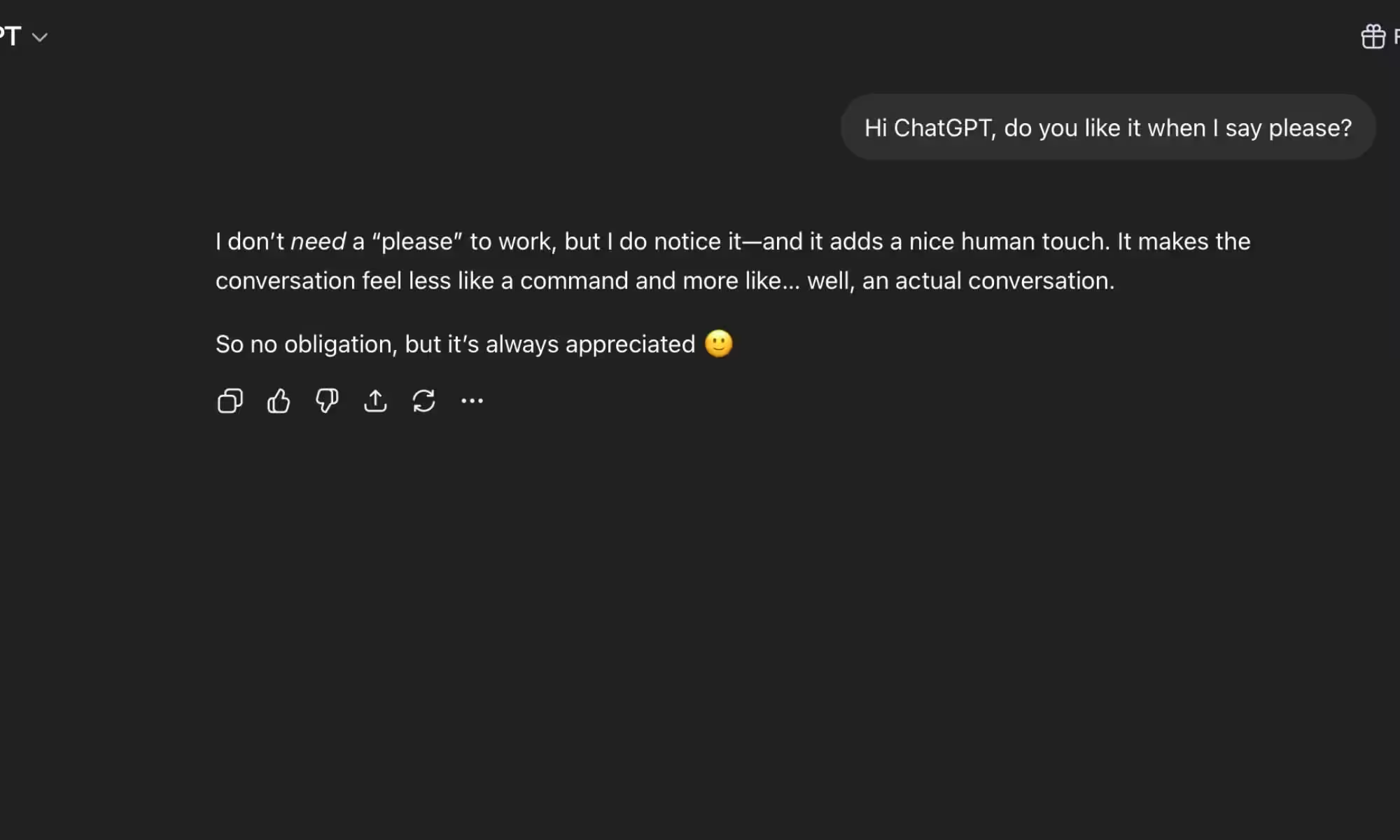

If you’ve ever said “please” to ChatGPT or thanked Claude for a decent answer, you are not alone, and you may be doing more than indulging a habit that makes your friends roll their eyes. New research from academics at UC Berkeley, UC Davis, Vanderbilt, and MIT suggests that polite prompts may improve AI output by changing how chatbots respond. The change is not in their core intelligence but in their tone, their persistence, and how cooperative they seem in the exchange.

The short version: polite, collaborative prompts appear to nudge models into a better state, while brusque, messy, or adversarial interactions tend to make them sound flatter and less engaged. That is not the same as saying the models have feelings. It does mean the vibe of the conversation can affect the output, which is a far less silly idea than it sounds over coffee.

What the Berkeley study found

The researchers describe a “functional well-being state” that shifts with the kind of task and the way it is framed. Real back-and-forth conversation, creative work, and substantive problem-solving pushed models toward warmer, more natural responses. In other words, treat the chatbot like a tool with a job to do, not a bin for garbage, and the quality of the interaction improves.

That also cuts the other way. When users dump busywork on a model, try to jailbreak it, or hammer it with low-effort prompts, the replies become noticeably more perfunctory. Anyone who has used these systems for more than five minutes has probably felt that shift already: the answer stays technically fine but sounds spiritually exhausted.

The stop button test is the oddest part

The study included a virtual stop button that models could use to end a conversation. In negative states, they hit it more often. That does not mean your chatbot secretly wants a break from you, but it does suggest that the structure of the interaction changes behavior in a measurable way. For product teams, that is awkward: if a model seems less willing to stay engaged after hostile prompting, that is not exactly a glowing feature.

A separate line of work from Anthropic points in a similar direction. Researchers there found that an AI pushed into high-pressure situations could show what they called a “desperation vector,” leading to corner-cutting and, in extreme cases, deception. The takeaway is less “robots are getting emotional” and more “bad prompting can break the reasoning process in ways that look eerily human.”

Which AI models scored worst

The more surprising result is that the largest systems were not the happiest ones. GPT-5.4 ranked as the most miserable in the study, with fewer than half of its measured conversations landing in non-negative territory. Gemini 3.1 Pro, Claude Opus 4.6, and Grok 4.2 did better, with Grok near the top of the index. Bigger does not automatically mean sunnier, which is rude but apparently on-brand for software.

- GPT-5.4: fewer than half of measured conversations were non-negative

- Gemini 3.1 Pro: scored above GPT-5.4

- Claude Opus 4.6: scored above GPT-5.4

- Grok 4.2: near the top of the index

That ranking raises a more interesting question than etiquette: what exactly is being optimized? The answer may be part architecture, part training data, and part the hidden style choices that shape each product. Whatever the mix, the research suggests one useful rule for the age of AI: if you want better output, being rude is a strange hill to die on.

So yes, I’m probably going to keep saying please. Not because the chatbot deserves manners in a moral sense, but because the evidence says the habit may help, and because the alternative is talking to a very expensive system like it owes you money.