Chinese AI lab DeepSeek has revealed two early versions of its new language model, DeepSeek V4, stepping up from last year’s V3.2 release and its associated reasoning model, R1.

The new variants-DeepSeek V4 Flash and V4 Pro-are built on a mixture-of-experts architecture. This design activates only a subset of parameters during tasks, cutting down compute expenses. Both support colossal context windows of up to 1 million tokens, enabling them to handle vast codebases and lengthy documents with ease.

The flagship V4 Pro packs a staggering 1.6 trillion parameters, of which roughly 49 billion are active at any moment. This positions it as the largest open-weight model currently available, surpassing rivals like Moonshot AI’s Kimi K 2.6 (1.1 trillion parameters), MiniMax M1 (456 billion), and more than doubling DeepSeek’s own previous V3.2 (671 billion). The slimmer V4 Flash comes with 284 billion parameters, activating about 13 billion.

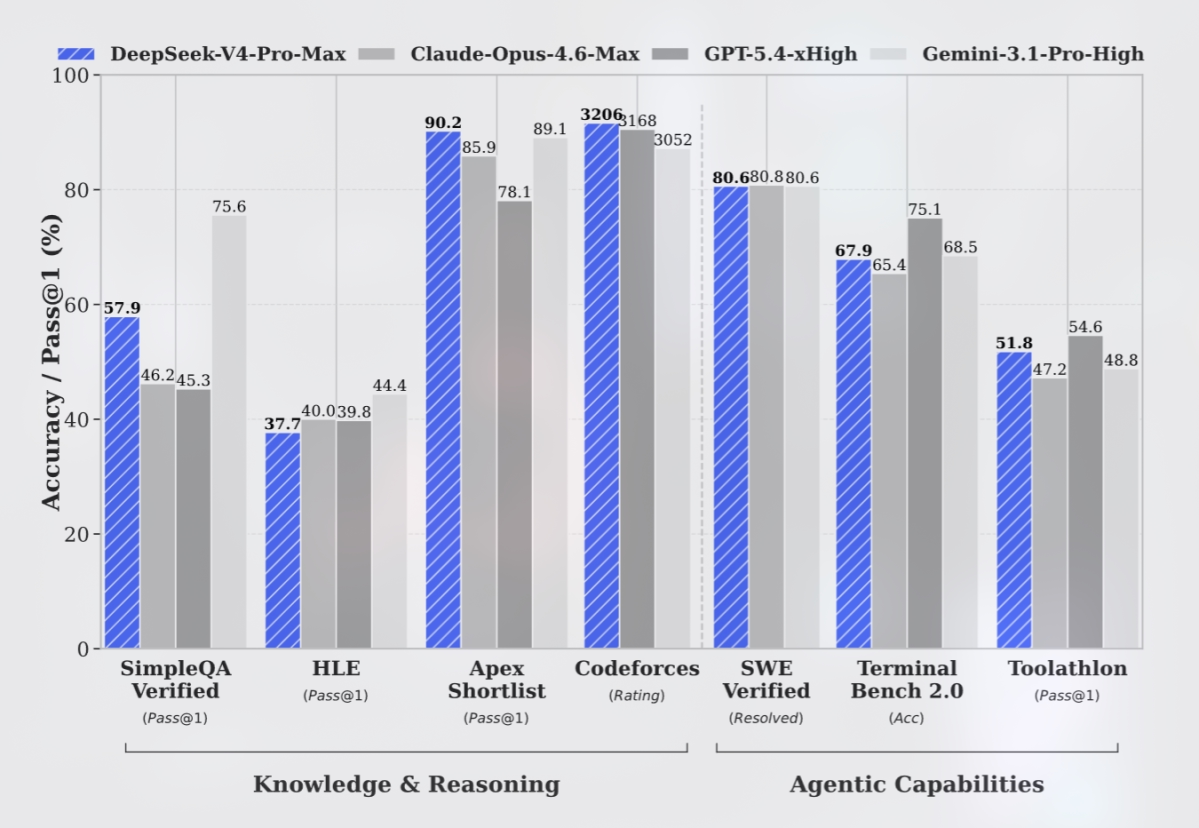

DeepSeek credits architectural tweaks for notable gains in efficiency and performance. In logical reasoning benchmarks, their models nearly match top competitors, with the V4 Pro Max edging past OpenAI’s GPT-5.2 and Google’s Gemini 3.0 Pro in some tasks. In programming challenges, both V4 versions deliver results comparable to GPT-5.4.

However, in general knowledge assessments, DeepSeek’s models lag slightly behind leading solutions like Gemini 3.1 Pro and GPT-5.4, with the company estimating a three- to six-month gap behind the current edge.

Unlike many rivals that already process images, video, and audio, DeepSeek’s V4 models remain text-only-limiting their application scope compared to multimodal competitors from industry leaders like OpenAI and Google.

One standout feature is cost. The V4 Flash pricing is set at $0.14 per million input tokens and $0.28 per million output tokens, undercutting models such as GPT-5.4 Nano, Gemini 3.1 Flash, and Claude Haiku 4.5. The beefier V4 Pro charges $0.145 for input and $3.48 for output tokens, which is also cheaper than offerings like GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro.

This launch arrives amid growing tensions: the U.S. has accused China of widespread intellectual property theft from American AI firms. Specifically, Anthropic and OpenAI have previously claimed that DeepSeek employs ”distillation” techniques-which effectively replicate the behavior of their models.

DeepSeek’s approach highlights a key trend in large language models: balancing massive size with operational efficiency and affordability. While their models don’t yet lead in multimodal capabilities or general knowledge benchmarks, their cost-effective, high-parameter design positions them as a contender to watch-especially in sectors prioritizing large-context text processing.