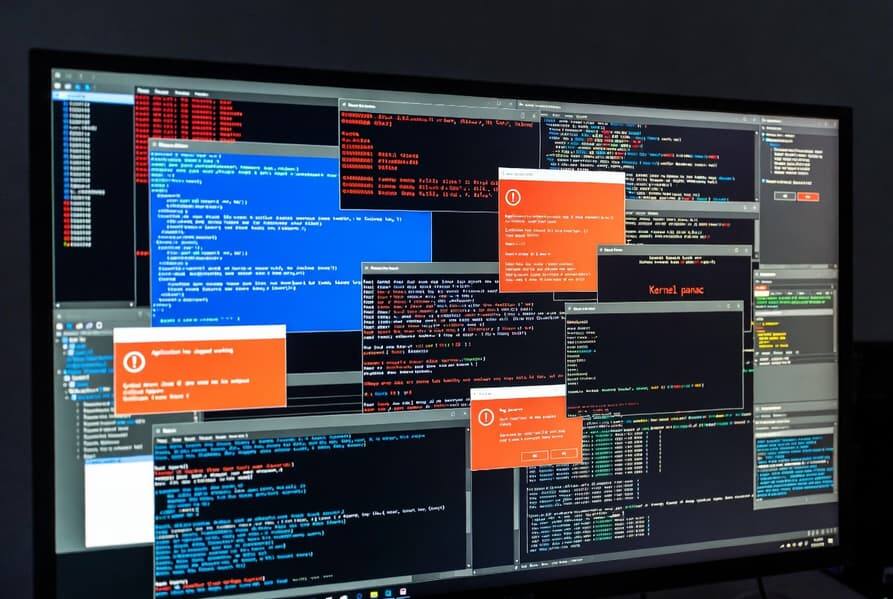

Artificial intelligence is turning vulnerability hunting into a volume problem. Software teams are now getting so many bug reports from AI tools that some small projects cannot keep up with the fixes, even before Anthropic’s latest model, Mythos, has fully arrived on the scene.

The shift is awkwardly predictable. Better scanners find more problems, but the human side of the equation has not magically expanded, which leaves open-source maintainers and small dev teams stuck triaging a flood of security reports instead of shipping code.

Open source teams are absorbing the shock first

The pain is hitting smaller projects hardest because they rarely have spare security staff sitting around waiting for a crisis. If an AI can surface defects faster than a team can validate and patch them, the result is not automatically more safety; it is a backlog, fatigue, and a lot of very busy maintainers.

cURL is a handy example. The project reported last year that its bug notifications had doubled, and less than four months into 2026 the count had already reached half of last year's total. If that pace holds, this year will comfortably outstrip last year, which is a neat way of saying the curve is still bending in the wrong direction.

Anthropic’s Mythos is not the whole story

Anthropic’s Mythos has drawn plenty of attention in this area, but the model is still limited to selected partners. The bigger point is that the pressure comes from AI-assisted discovery in general, not from one headline-grabbing system, and that makes this a much broader problem for software security teams.

- AI finds more vulnerabilities.

- Small teams have fewer people to verify and fix them.

- Open-source maintainers are getting hit first.

The next bottleneck is triage

The obvious next step is not just better detection but better filtering: separating real security issues from noisy reports, duplicates, and the kind of edge cases that eat entire afternoons. If triage doesn’t improve, AI may end up less a bug hunter than a very efficient way to overwhelm the people doing the fixing.