People are wary of dropping private photos into cloud services. DuckDuckGo is banking on that fear with a new tool: Duck.ai can now edit images from user prompts, for free and without creating an account. It sounds like a tidy answer to a growing privacy problem – but the reality is more nuanced.

What just changed

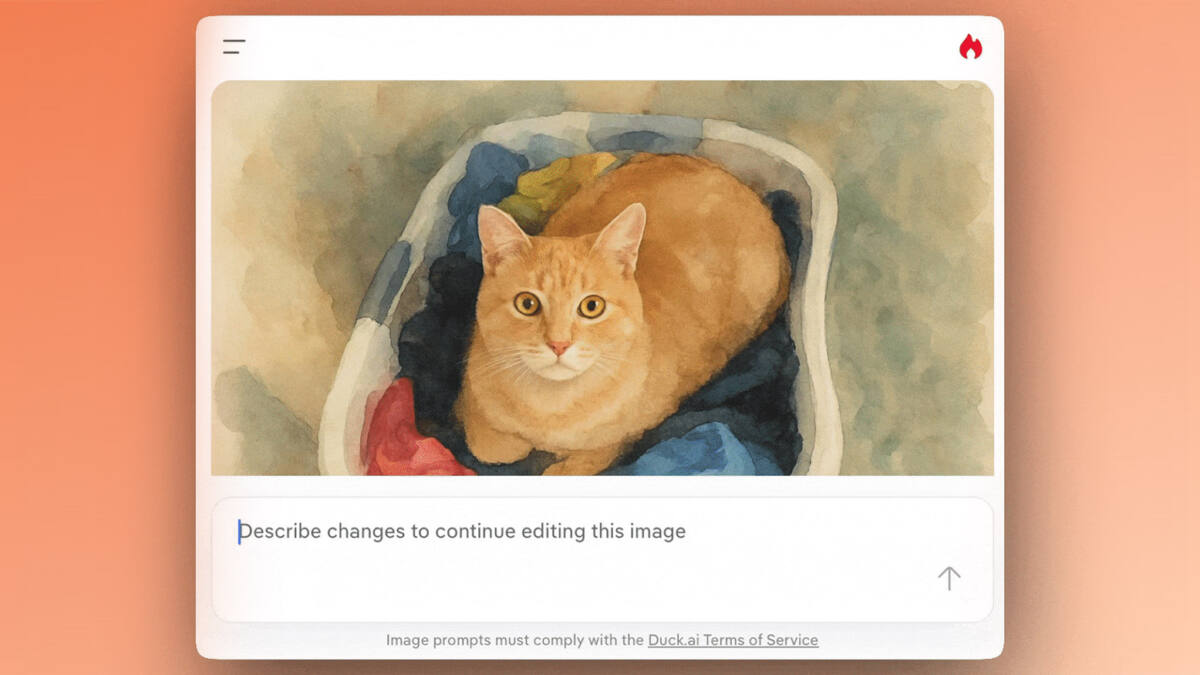

Duck.ai’s image-editing feature lets you start with an image by clicking a menu option or dragging files (JPEG, JPG, PNG, or WebP) into the prompt box, then describing the changes you want. Under the hood, the edits are produced by an OpenAI model. Duck.ai removes image metadata and the user’s IP address before sending prompts to the model, and subscribers receive higher daily limits than free users. Duck.ai also stresses that “uploaded images are stored locally on your device.”

Why this matters now

AI image editing is no longer exotic. Cloud services from major AI vendors and creative apps already let users remove backgrounds, inpaint, relight scenes and generate new content from photos. What sets Duck.ai apart is the positioning: the product is explicitly pitched at people who want AI tools but don’t want those tools baked into their search history or tied to an always-on profile.

That pitch plays into two fast-moving trends. First, consumers are more sensitive about sending personal media to powerful models. Second, there’s a commercial scramble to add AI features to everyday utilities (search, messaging, photo editing) while avoiding the backlash that followed early, blunt integrations.

The privacy trade-offs

DuckDuckGo’s privacy moves are meaningful but limited. Stripping metadata and masking IP addresses reduces accidental leaks of location or device information. Saying images are kept “locally on your device” is reassuring – up to the point you opt to send them to a model for editing.

Once an image is handed to a third-party model provider, the visual content itself is exposed to that provider. Duck.ai uses an OpenAI model, so the pixels of your photo travel beyond your device even if identifying metadata does not. Hiding who sent an image is not the same as hiding the image, and that gap is the crucial distinction between privacy theater and privacy engineering.
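To make that distinction concrete, here is a minimal sketch of what metadata stripping can look like for a JPEG file. It is an illustration under stated assumptions, not DuckDuckGo's implementation, which has not been published: the function name `strip_jpeg_metadata` and the choice of which segments to drop (APP1 for EXIF/XMP, APP13 for IPTC, COM for comments) are ours, and the parser ignores rarer cases such as fill bytes between segments.

```python
import struct

# Second marker byte of segments that commonly carry metadata (an assumption
# for this sketch): APP1 (EXIF/XMP), APP13 (IPTC/Photoshop), COM (comments).
METADATA_MARKERS = {0xE1, 0xED, 0xFE}


def strip_jpeg_metadata(data: bytes) -> bytes:
    """Return a copy of a JPEG byte string without APP1, APP13 and COM segments."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing start-of-image marker")

    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError("malformed JPEG: expected a marker")
        marker = data[pos + 1]

        if marker == 0xDA:
            # Start of scan: everything from here on is the compressed image
            # itself, so it is copied through byte for byte.
            out += data[pos:]
            return bytes(out)

        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker not in METADATA_MARKERS:
            out += data[pos:pos + 2 + length]
        pos += 2 + length

    raise ValueError("malformed JPEG: no start-of-scan marker found")
```

Calling `strip_jpeg_metadata(open("photo.jpg", "rb").read())` (the file name is a placeholder) would drop embedded EXIF data such as GPS coordinates and camera details, but the scan data, meaning the picture, comes out unchanged. That is the limit of this kind of protection: whatever is visible in the photo still reaches whoever receives the file.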

What Duck.ai didn’t (yet) answer

Tools that generate or edit images are increasingly being paired with provenance standards such as C2PA (from the Coalition for Content Provenance and Authenticity) to show when an image was AI-altered. DuckDuckGo has not confirmed whether edited images will carry C2PA-compliant metadata or other provenance markings. That matters for downstream uses – from social sharing to journalistic workflows.

Who wins and who loses

The winners are privacy-minded consumers and anyone who wants quick edits without signing up for another service. DuckDuckGo itself has a shot at converting casual users into paid subscribers via higher daily limits. OpenAI benefits too: the company gets more model usage without building a consumer-facing editor itself.

Where losses could show up is trust. If users take “privacy-first” to mean absolute privacy and later discover that the model provider saw their image content, reputational damage can follow. Competitors that can offer on-device editing or stronger provenance guarantees may steal the privacy crown.

How this fits into the broader market

Many companies are offering similar capabilities but with different trade-offs: some push cloud-first workflows that maximize quality and scale, while others invest in on-device processing to reduce data exposure. Expect those lines to harden: privacy-focused players will keep advertising limited telemetry, while big AI vendors will lean on accuracy and feature breadth.

Verdict and what to watch

Duck.ai’s image editor is a tidy, sensible product for people who want edits without a new account or obvious metadata leaks. But “privacy-first” is a relative term: the visual content still travels to a model provider, and provenance support is unresolved. If you handle sensitive photos, assume content may be exposed unless the editor explicitly guarantees on-device processing or cryptographic assurances.

Watch for three moves next: Duck.ai clarifying provenance and C2PA support, a possible push toward on-device models or optional local-only editing, and competing services offering clearer guarantees (or trade-offs) around where model inference happens. In other words: read the fine print before you hit send on a photo.