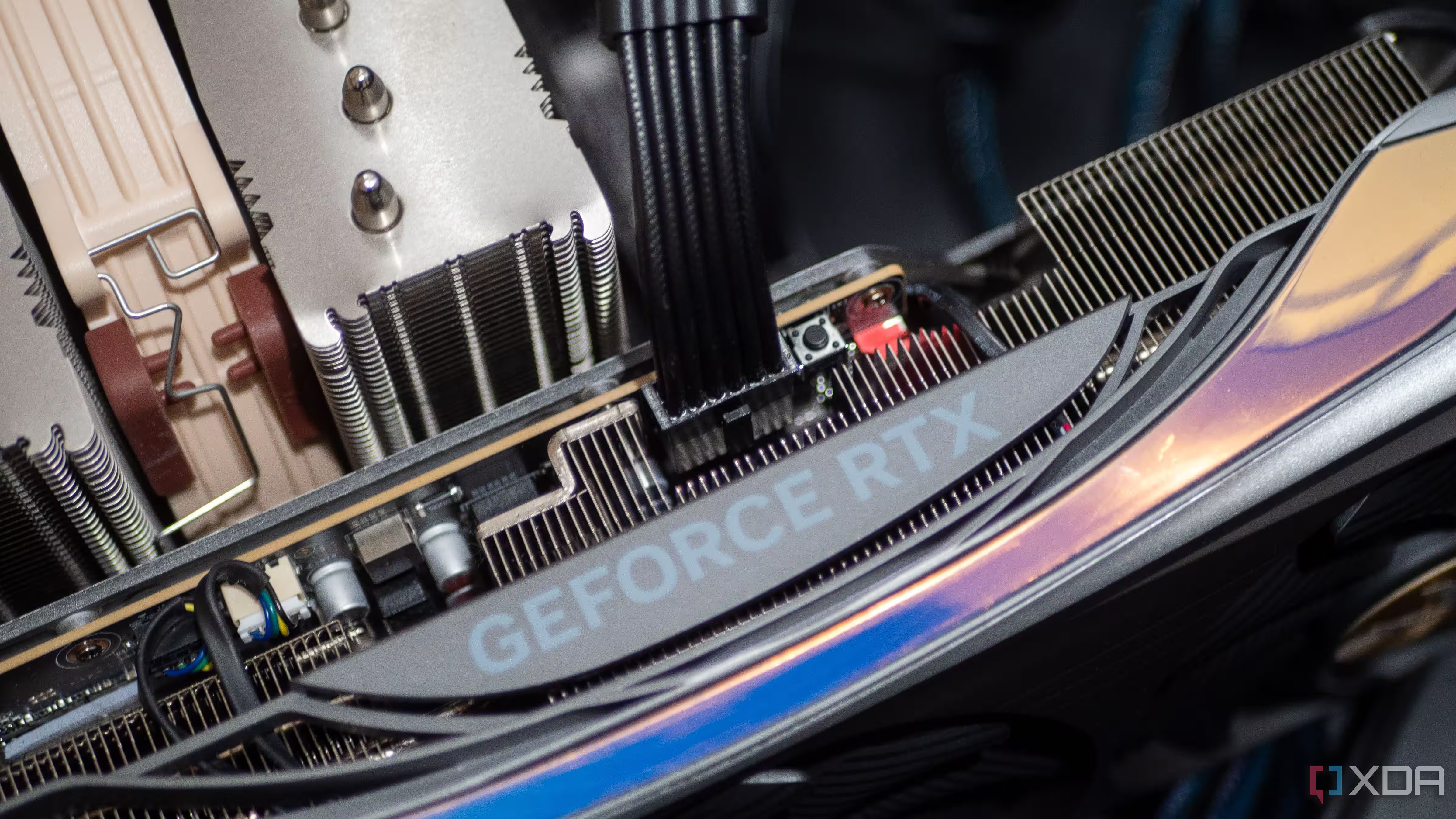

Despite being over five years old, Nvidia’s RTX 3090 continues to dominate as the top choice for local AI workloads in 2026. Its unique combination of high VRAM, solid performance, and affordability makes it the standout option for AI enthusiasts building home workstations, even as newer GPUs push the boundaries in gaming and raw speed.

While the latest Nvidia and AMD graphics cards boast advanced architectures, none offer the same VRAM-per-dollar ratio the RTX 3090 delivers. With 24GB of GDDR6X memory, this GPU comfortably accommodates large language models (LLMs) with tens of billions of parameters, typically in quantized form, enabling users to load entire models without memory bottlenecks. That is something that often limits newer cards that trade VRAM for raw speed.
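To make the VRAM argument concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at different precisions. It counts weights only; the 2GB headroom for the KV cache, activations, and runtime overhead is an illustrative assumption, not a measured figure, and the function names are hypothetical.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal GB."""
    # params_billion * 1e9 weights * (bits / 8) bytes each, divided by 1e9 bytes per GB
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float = 24.0, headroom_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed headroom fit in the given VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


if __name__ == "__main__":
    # (parameters in billions, bits per weight): illustrative model sizes
    for params, bits in [(7, 16), (13, 16), (30, 4), (70, 4)]:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{params}B at {bits}-bit: ~{size:.1f} GB of weights, {verdict} in 24 GB")
```

Under these assumptions, a 30-billion-parameter model quantized to 4 bits needs about 15GB for its weights and fits on a single 24GB card, while a 13-billion-parameter model at full 16-bit precision does not.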

The RTX 3090’s 384-bit memory interface and 936 GB/s bandwidth remain impressive, ensuring smooth performance across demanding AI tasks such as image generation, proprietary code execution, and automation agent management. Even Nvidia’s RTX 5090, which sports 32GB of GDDR7 memory, falls short on cost efficiency: the newer card’s exorbitant price puts it out of reach for most hobbyists and smaller teams.
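The 936 GB/s figure follows directly from the bus width and the memory's per-pin data rate. The sketch below reproduces it, assuming the RTX 3090's published 19.5 Gbps GDDR6X data rate (a spec not stated in this article). It also derives an idealized ceiling for token generation, on the simplifying assumption that every generated token requires reading all of the weights once; real throughput will be lower. The 15GB model size is a hypothetical example.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    # Each pin moves data_rate_gbps gigabits per second; 8 bits per byte.
    return bus_width_bits / 8 * data_rate_gbps


def decode_ceiling_tokens_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Idealized upper bound on tokens/s when decoding is memory-bound."""
    # Assumes one full pass over the weights per token and nothing else.
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    bandwidth = peak_bandwidth_gb_s(384, 19.5)  # 19.5 Gbps is an assumed spec value
    print(f"Peak bandwidth: {bandwidth:.1f} GB/s")
    ceiling = decode_ceiling_tokens_s(bandwidth, 15.0)  # hypothetical 15 GB model
    print(f"Decode ceiling for a 15 GB model: {ceiling:.1f} tokens/s")
```

The point of the second function is only to show why bandwidth matters as much as capacity for local LLMs: once a model fits in VRAM, how fast the card can stream its weights sets a hard limit on generation speed.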

Beyond VRAM, the RTX 3090’s Ampere architecture with 10,496 CUDA cores still delivers strong performance on modern AI frameworks. Its third-generation Tensor cores support popular mixed-precision formats like FP16 and BF16, allowing it to accelerate both training and inference in a wide range of AI workloads. While newer GPUs outperform it in raw speed, the mature software ecosystem around the RTX 3090 provides reliability and optimized support that some newer cards can’t match yet.

Among competitors, AMD’s RX 7900 XTX matches the RTX 3090’s VRAM capacity at 24GB and shares a similar price range on the used market. However, Nvidia retains a clear edge due to the widespread support of CUDA in AI applications, which remains more mature and flexible than AMD’s ROCm ecosystem, especially for proprietary and advanced models.

Pricing solidifies the RTX 3090’s lead. Current prices compare as follows:

- $600 to $800 for a used RTX 3090, roughly 47% to 60% below the original $1,499 launch price

- Over $2,000 for used RTX 4090 or RTX 5090 models

- Upwards of $3,800 for a brand-new RTX 5090

This affordability means you could buy two RTX 3090s for less than the price of one RTX 5090, enabling a multi-GPU setup with 48GB of combined VRAM that can host larger models than any single consumer card.
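The VRAM-per-dollar comparison can be worked out from the prices quoted above. This sketch treats the "over $2,000" and "upwards of $3,800" figures as price floors, and assumes the RTX 4090's 24GB capacity, which the article does not state. The labels and function name are illustrative.

```python
# (label, price in USD, VRAM in GB); prices are the figures quoted in this article
cards = [
    ("RTX 3090 (used, low)", 600, 24),
    ("RTX 3090 (used, high)", 800, 24),
    ("RTX 4090 (used, floor)", 2000, 24),  # 24 GB is an assumption from the card's spec
    ("RTX 5090 (new, floor)", 3800, 32),
]


def usd_per_gb(price_usd: float, vram_gb: float) -> float:
    """Cost of each gigabyte of VRAM in US dollars."""
    return price_usd / vram_gb


# Two used RTX 3090s at the top of the quoted price range
dual_3090_price = 2 * 800
dual_3090_vram = 2 * 24

if __name__ == "__main__":
    for label, price, vram in cards:
        print(f"{label:<24} ${usd_per_gb(price, vram):6.2f} per GB")
    print(f"Two RTX 3090s: ${dual_3090_price} for {dual_3090_vram} GB "
          f"(${usd_per_gb(dual_3090_price, dual_3090_vram):.2f} per GB)")
```

Note that the combined 48GB is not one pool of memory: the inference or training framework has to split the model across the two cards, so the per-GB figure is a cost comparison rather than a performance one.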

In essence, the RTX 3090 is the sweet spot for those seeking a potent, VRAM-rich, and cost-effective GPU solution for local AI. Its blend of mature hardware, comprehensive CUDA support, and excellent price-value ratio keeps it ahead of the curve, making it the go-to card for many AI enthusiasts who want to skip cloud subscriptions and run large models on their own machines.