Nvidia has officially launched its DGX Station workstation, first unveiled at the GTC 2025 conference. Targeted at software developers, researchers, and data scientists, the DGX Station delivers significantly more power than the compact DGX Spark model. Preorders are already open through OEMs including Asus, Dell, Gigabyte, MSI, Supermicro, and HP, and shipments are expected to start within a few months.

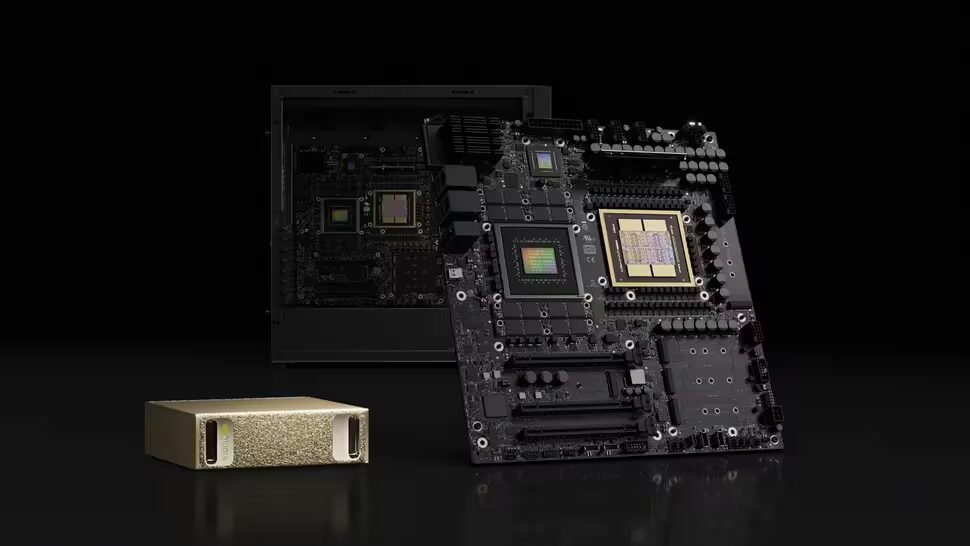

At the heart of the DGX Station is Nvidia’s GB300 Grace Blackwell Ultra Superchip. This desktop “superchip” combines a 72-core Grace CPU with a Blackwell Ultra GPU, connected via a high-speed NVLink-C2C interface capable of 900 GB/s. The system offers 748 GB of unified memory: 496 GB of LPDDR5X for the CPU (396 GB/s bandwidth) and 252 GB of HBM3e dedicated to the GPU (7.1 TB/s bandwidth). This architecture allows the CPU and GPU memory pools to function as a single shared resource, giving each processor direct access to the other’s memory, which is critical for AI workloads that outgrow either pool on its own.
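To make the capacity figures concrete, here is a rough sizing sketch in Python. It uses only the capacities and bandwidths quoted above. The bytes-per-parameter table and the function names are illustrative assumptions, and the estimate covers model weights only (no KV cache, activations, or OS overhead), so treat the results as optimistic bounds rather than measured behavior.

```python
# Figures quoted in the article, in decimal GB and GB/s.
HBM3E_GB = 252          # GPU-attached HBM3e capacity
LPDDR5X_GB = 496        # CPU-attached LPDDR5X capacity
LPDDR5X_GBPS = 396      # LPDDR5X bandwidth
NVLINK_C2C_GBPS = 900   # NVLink-C2C bandwidth

# Assumed bytes per parameter for common weight formats (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weights_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight footprint in GB (billions of params x bytes each)."""
    return params_billions * BYTES_PER_PARAM[dtype]


def placement(params_billions: float, dtype: str) -> str:
    """Classify where the weights would land in the two memory pools."""
    size = weights_gb(params_billions, dtype)
    if size <= HBM3E_GB:
        return "fits in HBM3e"
    if size <= HBM3E_GB + LPDDR5X_GB:
        return "spills into LPDDR5X"
    return "exceeds unified memory"


def spill_read_seconds(params_billions: float, dtype: str) -> float:
    """Lower bound on the time for the GPU to read the spilled weights once."""
    spilled = max(0.0, weights_gb(params_billions, dtype) - HBM3E_GB)
    # The slower of the LPDDR5X pool and the NVLink-C2C link bounds the read.
    return spilled / min(LPDDR5X_GBPS, NVLINK_C2C_GBPS)


if __name__ == "__main__":
    for params, dtype in [(70, "fp16"), (200, "fp16"), (405, "fp8")]:
        print(params, dtype, placement(params, dtype),
              round(spill_read_seconds(params, dtype), 3))
```

The point of the sketch is that once weights spill past the HBM3e pool, the LPDDR5X bandwidth rather than the NVLink-C2C link becomes the limiting figure for reading them.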

Unlike closed-box solutions, the DGX Station includes three PCIe Gen 5 x16 slots: one wired with 16 lanes and two wired with eight lanes each. These slots support discrete graphics cards such as the RTX Pro 6000 Workstation Edition, RTX Pro 6000 Blackwell Max-Q, RTX Pro 4000 Blackwell SFF, and RTX Pro 2000 Blackwell. This flexibility enables the DGX Station to handle a broad range of tasks, including AI training, simulation, rendering, and ray tracing.
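The lane split affects how much bandwidth each add-in card can get. The sketch below estimates theoretical per-direction throughput per slot. The 32 GT/s signaling rate and 128b/130b encoding come from the PCIe 5.0 specification, not from the article, and the function names are illustrative. Real throughput will be lower once packet and protocol overhead are included.

```python
# PCIe 5.0 link parameters (from the PCIe specification, not the article).
GT_PER_S_PER_LANE = 32.0      # raw signaling rate per lane, gigatransfers/s
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_gb_per_s() -> float:
    """Theoretical usable GB/s per lane, per direction."""
    return GT_PER_S_PER_LANE * ENCODING_EFFICIENCY / 8  # bits -> bytes


def slot_gb_per_s(lanes: int) -> float:
    """Theoretical usable GB/s for a slot wired with the given lane count."""
    return lanes * lane_gb_per_s()


if __name__ == "__main__":
    # Lane wiring described in the article: one x16 and two x8 slots.
    for lanes in (16, 8, 8):
        print(f"x{lanes}: {slot_gb_per_s(lanes):.1f} GB/s per direction")
```

In practice this means a card placed in one of the eight-lane slots has roughly half the host bandwidth of the same card in the sixteen-lane slot.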

Networking is powered by an Nvidia ConnectX-8 SuperNIC adapter with dual QSFP112 ports, delivering up to 800 Gbps bandwidth. Two DGX Stations can be linked to scale up compute-heavy simulations and AI workloads.
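As a back-of-the-envelope illustration of what 800 Gbps means when moving data between two linked systems, the sketch below converts the quoted line rate into a transfer time. The `efficiency` parameter and the 500 GB example size are hypothetical values chosen for illustration; the article gives no measured throughput.

```python
LINK_GBPS = 800.0  # aggregate line rate quoted in the article, gigabits/s


def transfer_seconds(size_gb: float, link_gbps: float = LINK_GBPS,
                     efficiency: float = 1.0) -> float:
    """Time to move size_gb gigabytes over the link.

    efficiency is an assumed fraction of line rate actually achieved
    (1.0 means the theoretical best case).
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return size_gb * 8 / (link_gbps * efficiency)  # bytes -> bits


if __name__ == "__main__":
    # Hypothetical 500 GB checkpoint at line rate and at 80% of line rate.
    print(transfer_seconds(500))
    print(transfer_seconds(500, efficiency=0.8))
```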

Storage is handled by four M.2 slots, and the board also provides standard audio and USB ports. Power is delivered through a 24-pin ATX connector, an 8-pin EPS connector, and three 12V-2×6 connectors for the GPUs, with total system power consumption rated at 1600 watts.

The DGX Station fits between Nvidia’s small-footprint AI systems like the DGX Spark and its full-size rack-mounted DGX servers. It is Nvidia’s first desktop supercomputer to bring the GB300 unified memory architecture to a form factor accessible to individual researchers and small labs. While pricing hasn’t been officially announced, analysts estimate a starting price of around $40,000 to $50,000, a level far beyond consumer budgets but attractive for corporate R&D and academic institutions.

Here is a summary of the DGX Station pricing estimates and availability:

- Preorders open with OEMs: Asus, Dell, Gigabyte, MSI, Supermicro, HP

- Shipments expected within a few months from launch

- Estimated starting price: $40,000 to $50,000

Compared to systems built on Apple’s M-series chips or AMD’s AI-capable processors, Nvidia’s DGX Station pairs CPU-GPU unified memory with a workstation optimized for large-scale AI and data workloads. The inclusion of versatile PCIe slots and a high-end network adapter further distinguishes it from the typical compact AI systems currently available.

Looking ahead, the DGX Station could reshape how midsize research teams and enterprises deploy AI hardware, bridging the gap between compact desktop systems and bulky server racks. Two things to watch are how effectively Nvidia’s unified memory architecture accelerates real-world AI projects and whether broad OEM adoption drives wider availability or pricing adjustments in future iterations.