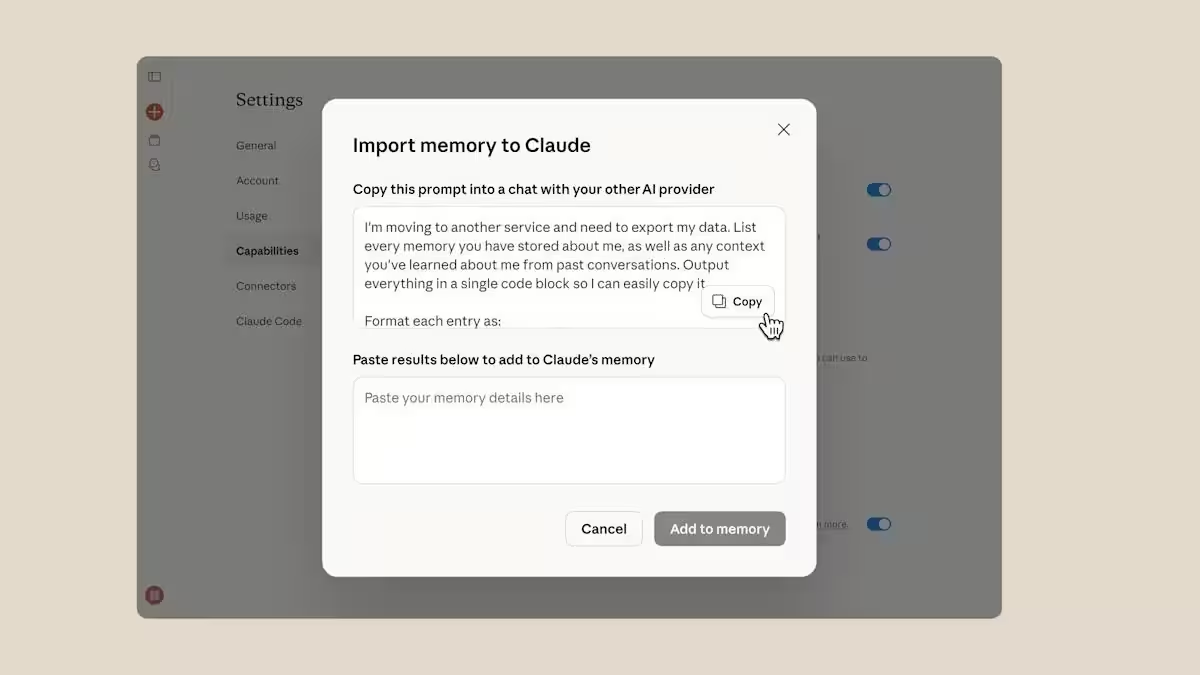

Anthropic has introduced a new feature that lets its Claude AI chatbot import past conversations from competing chatbots like ChatGPT, Gemini, and Copilot, making it easier for users to switch platforms without losing context. By using a memory import tool, users can extract conversation history from another AI, transform it into a text prompt, and feed it into Claude’s memory system. After around 24 hours, Claude integrates the imported data and can continue conversations right where they left off.

The feature is designed to enhance collaboration in professional contexts, as Claude prioritizes work-related information and tends to overlook personal details unrelated to productivity. Users can review what Claude has learned through a dedicated interface and toggle or edit specific memories in the app’s settings, giving them more control over the AI’s understanding of their history.

This rollout arrives amid a surge in Claude’s popularity: the app recently overtook ChatGPT to claim the top position in the App Store’s free apps chart. The timing is notable, as Anthropic recently stood firm against partnering with the U.S. Department of Defense on projects it deemed ethically problematic, specifically around surveillance and autonomous weapons. That stance contrasts with OpenAI, which secured a contract to supply AI models for defense purposes. Anthropic’s position has resonated with some users, fueling a movement to boycott ChatGPT and switch to alternatives like Claude.

Smoothing AI switching by bridging chat histories

AI chatbots have become increasingly central to how people work, write, and seek information, but switching between them typically means starting over with a blank slate. Anthropic’s memory import tool attempts to break this cycle by transferring the conversational “memory” from another AI to Claude. This approach acknowledges that users often invest significant time training an AI on their preferences, projects, and style, and that losing this context is frustrating.

While Claude’s 24-hour assimilation period might feel slow compared to instant syncing, it reflects the complexity of parsing and organizing diverse input histories from different AI systems. Plus, by allowing users to review and manage what information Claude retains, Anthropic is placing user agency front and center, a response to growing concerns over opaque AI data handling.

Positioning Claude amid ethical divides and market shifts

Anthropic’s decision to reject certain defense contracts contrasts sharply with OpenAI’s collaboration with the U.S. Department of Defense, highlighting a split in the AI industry over applications and ethical boundaries. This divergence might explain some of the shifts in user loyalty and public perception. Claude’s recent App Store success signals an appetite for AI tools seen as more aligned with users’ values.

However, this also raises questions about how future AI competition will balance technological advancement with ethical considerations. Anthropic’s stance appeals to privacy-conscious users and organizations wary of surveillance, but it also risks limiting funding opportunities that could accelerate development.

Moreover, the surge in interest around Claude underlines a broader trend of AI users seeking alternatives beyond the current giants. As more companies offer tailored AI experiences with unique value propositions, seamless interoperability, like Claude’s memory import tool, will become key in winning users who want flexibility without starting from scratch every time.

Anthropic’s challenge now is to expand Claude’s capabilities quickly while maintaining its ethical commitments and user trust, setting a precedent for how responsible AI development can coexist with user-centric innovation.
