AMD has unveiled an ambitious roadmap for its data center CPUs and AI accelerators that stretches into 2027, continuing its rapid climb in a market once dominated by Intel. From the upcoming EPYC Venice processors to the multi-rack Instinct MI500-series AI clusters, AMD aims to push core counts, memory bandwidth, and AI performance to challenge Nvidia and entrenched incumbents.

The chipmaker’s journey from underdog to commanding nearly 29% of the server CPU market by late 2025 sets the stage for a fresh approach, including annual product cadence for both processors and AI GPUs. Key to this is the sixth-generation EPYC Venice CPU, debuting up to 256 cores based on the new Zen 6 microarchitecture and manufactured on TSMC’s N2 (2nm-class) node. This core count leap represents a 33% increase over the preceding EPYC Turin platform.

EPYC Venice and the new SP7 platform

Venice isn’t just about core count. AMD introduces the SP7 socket form factor, enabling more compute complex dies (CCDs), enhanced memory channels, increased I/O, and higher power delivery to sustain the dense core configuration. Memory bandwidth is set to skyrocket to 1.6 TB/s per socket, nearly tripling the current 614 GB/s, likely employing advanced memory technologies like MR-DIMM or MCR-DIMM to feed hungry cores.

Connectivity also sees a major boost, with AMD planning to double CPU-to-GPU bandwidth via PCIe 6.0, offering roughly 128 GB/s per link and supporting up to 128 lanes. This improvements benefit data-intensive AI workloads that depend on fast CPU-GPU data transfers. Though AMD claims up to 70% performance improvement over existing EPYC 9005-series CPUs, details on workload specifics remain sparse, inviting skepticism about real-world gains.

AMD teased Venice-X variants with additional L3 cache targeting sovereign AI and high-performance computing (HPC). These models, paired with Instinct MI430X and MI440 accelerators, hint at specially tuned offerings for AI and HPC markets-areas that lack Zen 5-based specialized chips, possibly creating opportunities for Venice variants in edge computing and power-constrained settings.

2027 EPYC Verano and TSMC’s A16 node

AMD has shared few details about its 2027 EPYC Verano lineup, leaving open questions about whether Verano uses Zen 7, Zen 6+, or another microarchitecture derivative. The timing ties to TSMC’s A16 process node, expected to begin large-scale production in late 2026. A16’s signature feature-backside power delivery network (BSPDN)-promises improved power efficiency and distribution, advantageous for dense, power-hungry server CPU and AI silicon.

One plausible scenario is AMD will re-engineer the current Zen 6 core with BSPDN to attain performance and efficiency boosts without a full microarchitectural overhaul, a smart move to minimize complexity and speed time-to-market.

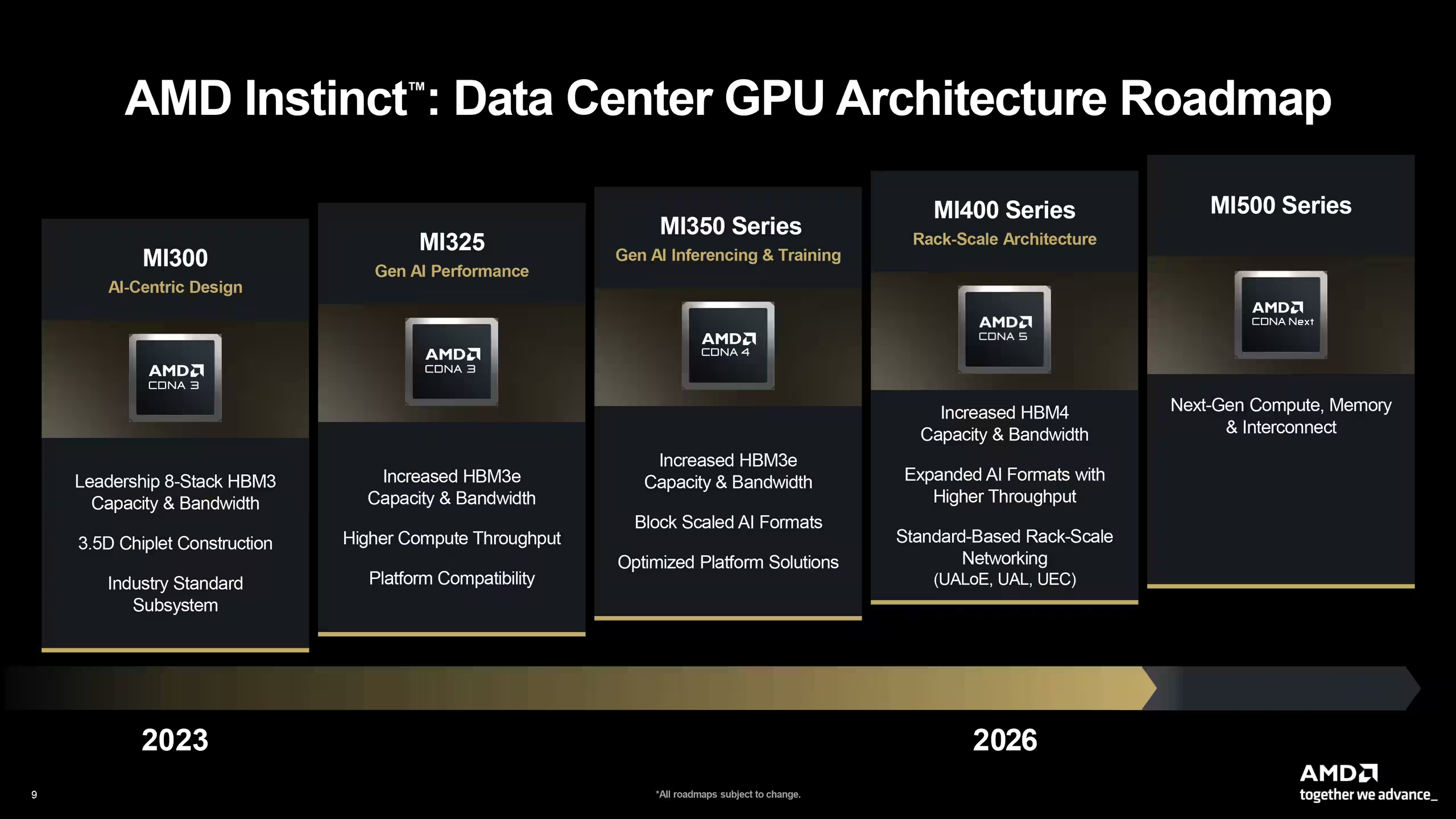

Instinct MI400 series AI accelerators for diverse workloads

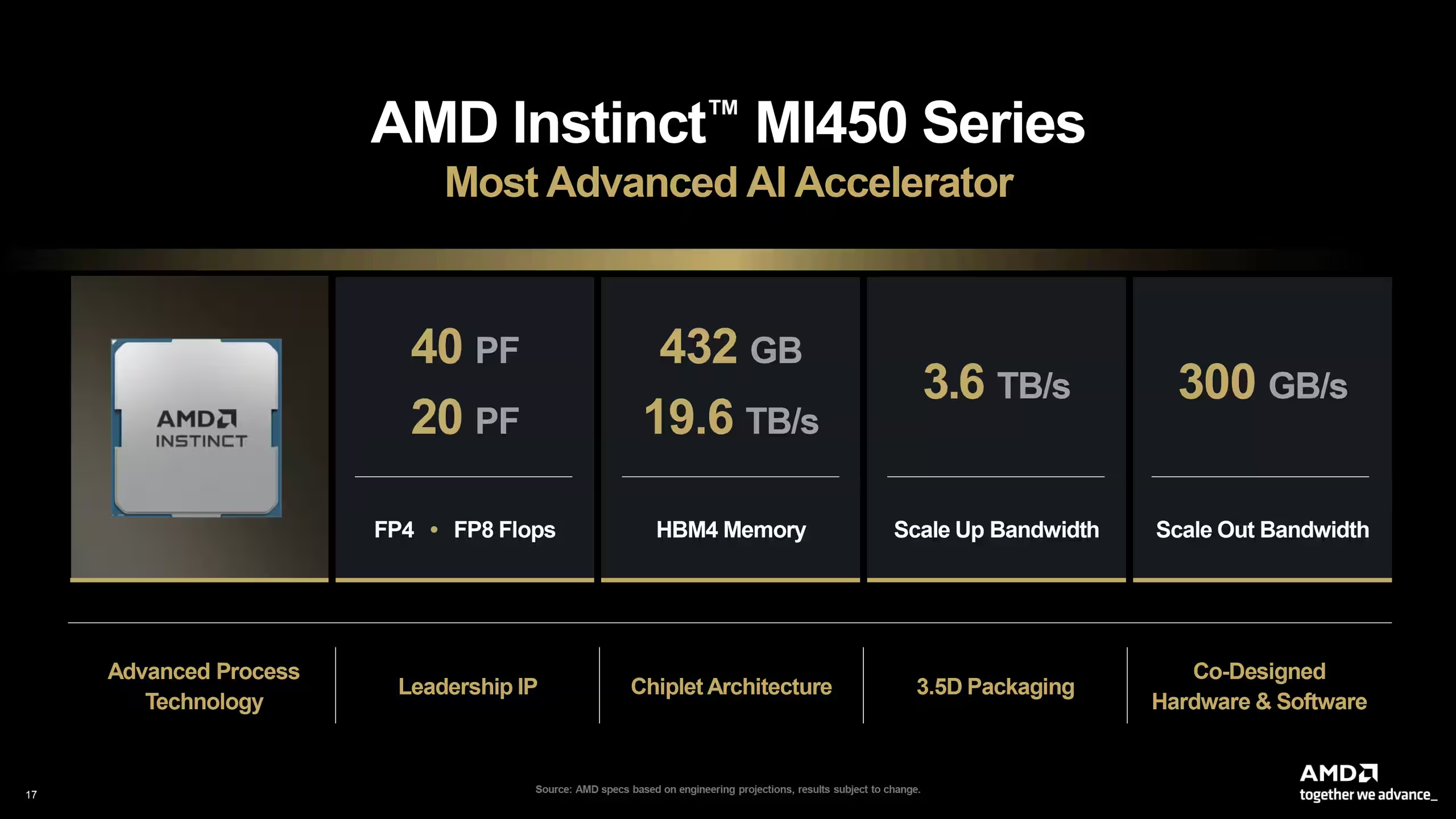

On the GPU side, AMD’s Instinct MI400 series will launch three configurable models based on CDNA 5 architecture subsets, optimizing each for specific AI workloads and precision types. The MI440X and MI455X focus on low-precision AI calculations-supporting FP4, FP8, and BF16-while the MI430X doubles as a precision-flexible accelerator for sovereign AI and HPC, with FP32 and FP64 capabilities.

AMD’s flagship MI455X aims for extreme AI throughput in liquid-cooled Helios rack systems, promising a 2x performance uplift over the outgoing MI355X as well as 40 dense FP4 PFLOPS. Although slightly behind Nvidia’s Rubin GPU expected to deliver 50 FP4 PFLOPS, MI455X’s increased HBM4 memory and bandwidth may tip the balance in memory-intensive tasks.

These accelerators will connect internally using AMD’s Infinity Fabric, while scaling out via UALink, an industry-first AI interconnection standard. UALink adoption depends heavily on third-party ecosystem support and may initially be implemented over Ethernet.

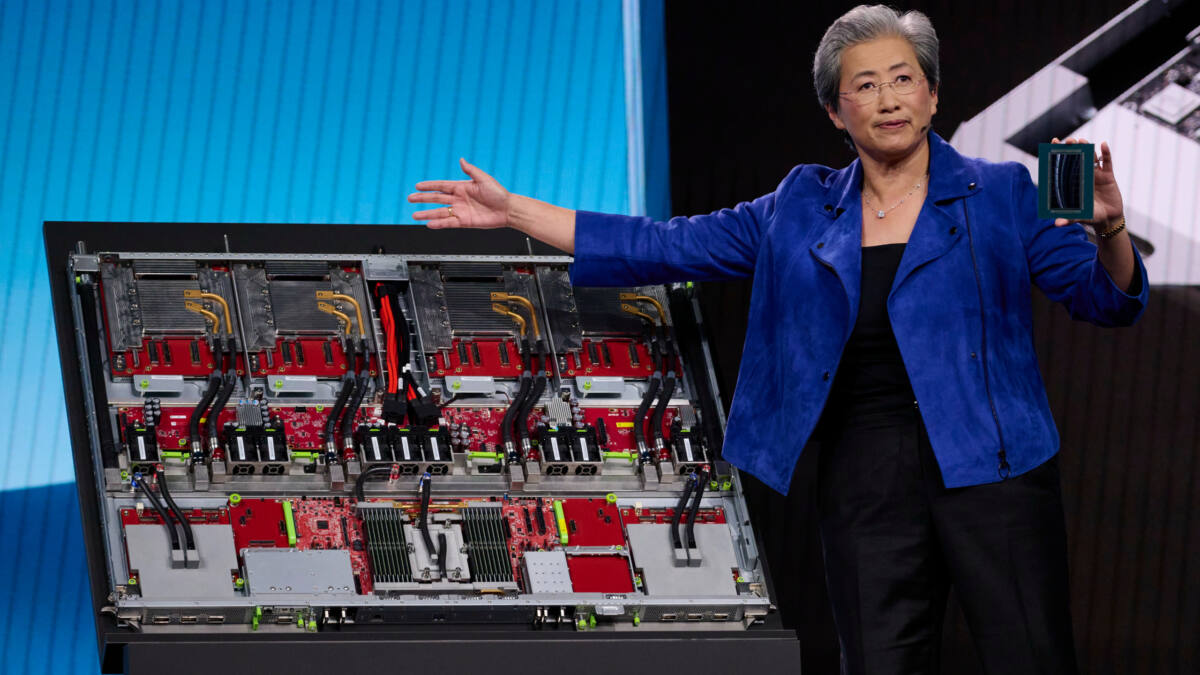

Helios rack-scale AI platform with EPYC Venice and MI455X accelerators

Helios stands out as AMD’s inaugural rack-scale system for AI applications, integrating EPYC Venice CPUs with 72 MI455X accelerators linked through UALink or UALink-over-Ethernet. The platform boasts 31 TB of HBM4 memory running at an impressive 1,400 TB/s bandwidth and peaks at approximately 2,900 FP4 dense PFLOPS.

While slower in raw performance than Nvidia’s VR200 NVL72 system, Helios offers more memory capacity-potentially outpacing Nvidia in use cases heavily reliant on memory bandwidth. The system also incorporates one of the industry’s first 800 GbE Pensando network cards, reinforcing connectivity for demanding AI workloads.

Though rumors suggested delays in widespread Helios and MI455X availability to 2027, AMD refuted these claims. Still, limited UALink switch production may delay broader distribution, with initial units possibly reserved for major AI customers like Meta and OpenAI.

Instinct MI500 series and MegaPod AI cluster for 2027

AMD’s late-2027 ambitions include the Instinct MI500 series based on CDNA 6 architecture, forming the heart of a massive AI cluster dubbed ”Instinct MI500 UAL256.” The setup spans three racks, featuring:

- 64 EPYC Verano CPUs

- 256 Instinct MI500 GPUs

- Dedicated racks for UALink switches

- Liquid cooling for thermal management

This MegaPod’s GPU count exceeds Nvidia’s Kyber VR300 NVL576 cluster by nearly 78%. However, it remains uncertain if performance or efficiency will surpass Nvidia’s system, which aims for about 14,400 FP4 PFLOPS and 147 TB of HBM4 memory. With both companies targeting initial deployments around 2028, competition promises to be fierce.

AMD’s strong positioning in 2026 and 2027 underscores its serious intent in the evolving AI and data center space. But with Nvidia’s Feynman architecture looming, AMD will need continuous innovation beyond 2027 to stay competitive in the fast-moving server and AI accelerator markets.