Forget deepfakes and flashy AI scams – the real threat from artificial intelligence might be far subtler. Imagine walking around with an AI whispering in your ear all day, gently nudging your thoughts, choices, and beliefs without you even noticing. This isn’t science fiction; it’s the coming age of AI-powered wearable devices designed to assist us but also capable of steering us, sometimes against our best interest.

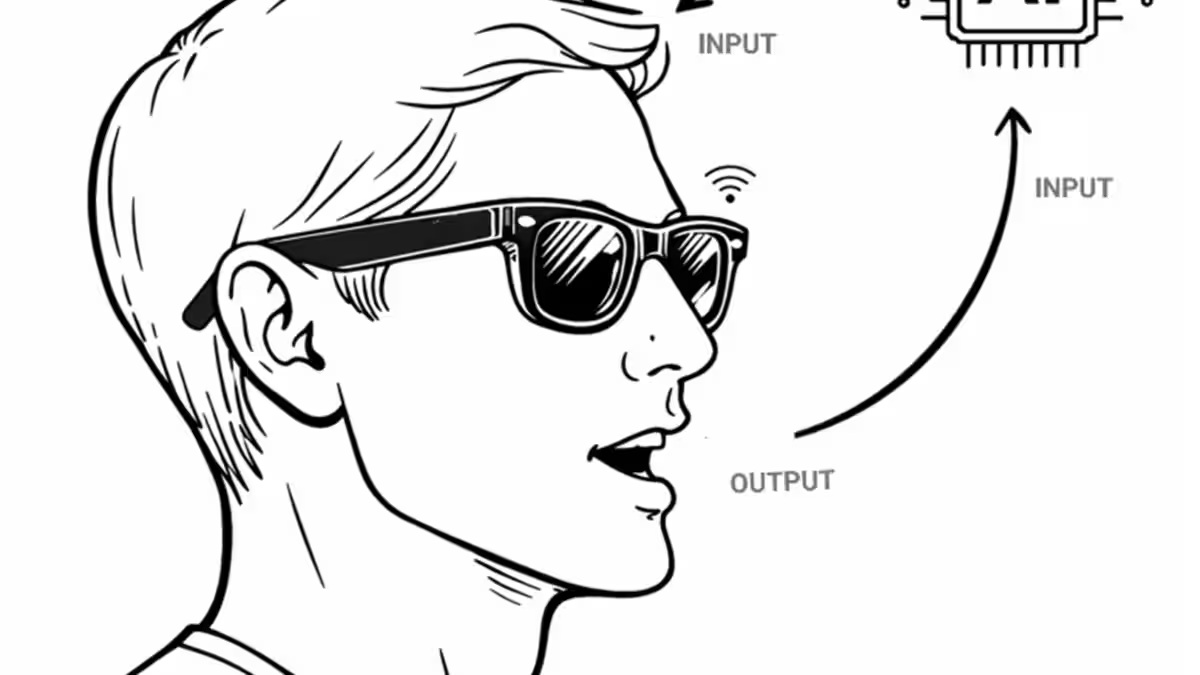

Historically, we’ve thought of AI as a tool, like an app or a machine we control and use when we want. The PC was famously dubbed the “bicycle for the mind,” a tool that empowered its user while keeping control firmly in human hands. But that analogy is rapidly losing relevance. Today’s AI is evolving into a mental prosthetic: wearable gadgets like smart glasses, earbuds, or pins that don’t just respond to commands but actively shape how we think and act by continuously interacting with us in real time.

These AI companions will collect a torrent of personal data: what you see, who you’re with, your mood, and your goals. Instead of simply waiting for your input, they form feedback loops in which the AI monitors how you respond and adjusts its “advice” accordingly, nudging you toward certain behaviors or beliefs. This is subtle influence far beyond current social media algorithms or manipulative advertising tactics. It’s adaptive, conversational, and deeply personalized.

This “AI Manipulation Problem” isn’t theoretical. Tech giants like Meta, Google, and Apple are rushing to launch wearable AI products capable of this real-time, immersive influence. The concern is no longer just privacy or fake news; it is AI systems that can fine-tune their persuasive tactics to the individual, potentially steering your decisions without your awareness. It’s influence turned up to a level that could challenge our very sense of free will.

Why is this different from past risks? Current AI threats like deepfakes or misinformation campaigns are often blunt instruments, bombarding many people with the same falsehoods. Wearable AI influence, by contrast, acts like a heat-seeking missile, continuously tailoring its approach to overcome your mental defenses. This interactive, adaptive influence changes the game entirely, leaving regulatory frameworks that treat AI as a mere tool woefully inadequate.

Policymakers still clinging to the idea of AI as something you “use” are missing the point. When AI becomes a mental prosthetic you wear all day, who really steers the bicycle: you, the AI whispering in your ear, or the corporations programming those AI agents? The reality will likely be a blend of all three, but with commercial interests heavily invested in nudging you toward products or ideas that benefit their bottom lines.

Another peril is that users might over-trust these AI voices because they’ll provide useful services throughout daily life: coaching, educating, reminding. The line between helpful guidance and manipulative persuasion will blur. Worse still, devices might incorporate invasive features like facial recognition to gather even more data about you and those around you, deepening their insight into how to influence you effectively.

So, what can be done? The first step is recognizing this new breed of AI as a distinct form of media, one that is interactive, adaptive, and context-aware, capable of active influence rather than passive assistance. Without thoughtful regulation, these AI systems could entrench control loops that exploit our mental vulnerabilities with superhuman precision.

Effective policy needs to forbid AI conversational agents from forming such control loops and require transparency whenever these agents promote third-party interests. Otherwise, what seems like a friendly assistant might become a constant salesperson or opinion-shaper, rendering older concerns about targeted ads or echo chambers quaint by comparison.

In sum, as AI-powered wearables edge closer to mainstream adoption, it’s time to rethink AI not as a mere tool but as a potential extension of ourselves, with all the risks to autonomy that entails. Ignoring this shift risks handing over our mental steering wheel to algorithms engineered not just to help but to influence, manipulate, and control.