As AI agents evolve beyond rigid programming to adapt dynamically to new data and conditions, the user interfaces meant to interact with them have stubbornly remained static, until now. A new technology called A2UI (agent-to-user interface) is breaking this mold by allowing AI agents themselves to dictate how user screens are constructed and rendered on the fly, transforming a traditionally fixed experience into a fluid, interactive dialogue.

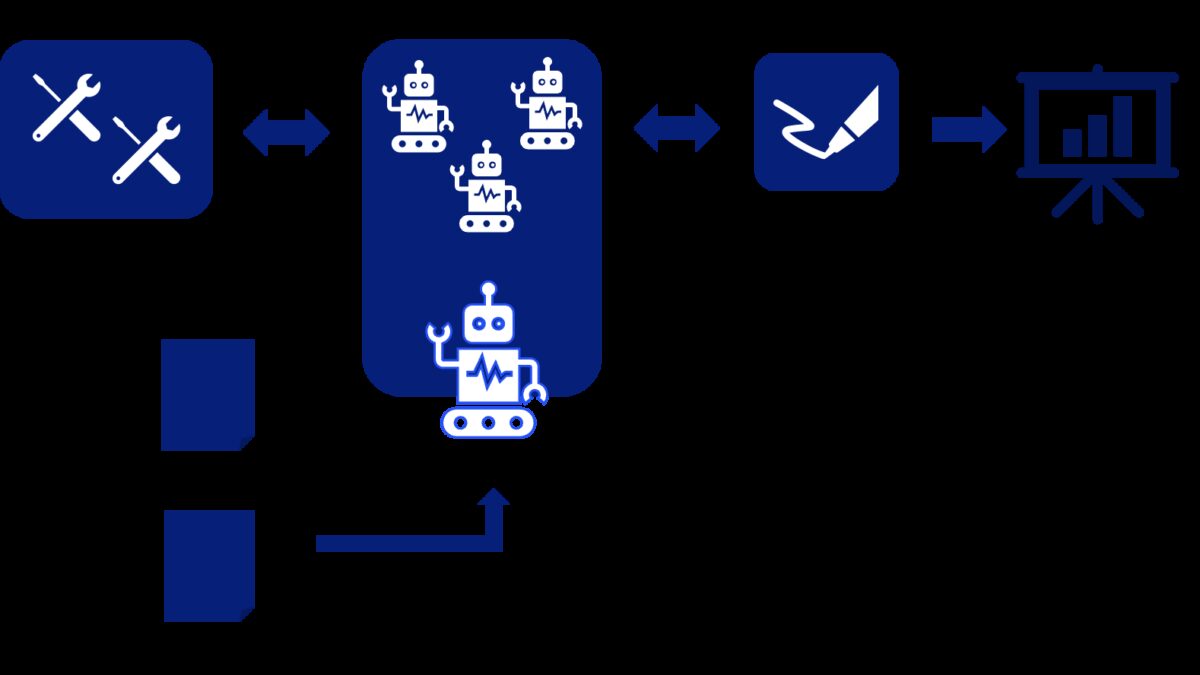

Traditional UX layers often constrain the capabilities of agentic AI, which can think and invent alternate paths when facing unforeseen scenarios. While ontologies like FIBO provide guardrails to keep AI behavior aligned with business logic, user interfaces have lagged behind, relying on pre-defined screens that limit AI’s ability to express its reasoning or adapt its outputs dynamically. Here, A2UI steps in with an architecture that decouples the interface from hardcoded designs. Instead of pre-built layouts, agents generate JSON-based specifications that a compliant renderer interprets into fully interactive user screens, linking back to agents through the AG-UI messaging protocol for real-time user-agent communication.
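To make the spec-and-renderer split concrete, here is a minimal sketch in Python. The component types, field names, and the `render` function are assumptions made for illustration; they are not the actual A2UI schema or any real renderer's API. The point is only that the agent emits data and a separate renderer decides how to draw it.

```python
import json
from html import escape

# Hypothetical spec an agent might emit. Field names are illustrative,
# not the actual A2UI schema.
SPEC_JSON = """
{
  "type": "form",
  "id": "loan-review",
  "children": [
    {"type": "text", "value": "Loan application review"},
    {"type": "input", "id": "rate", "label": "Interest rate (%)"},
    {"type": "button", "id": "approve", "label": "Approve", "action": "approve_loan"}
  ]
}
"""


def render(node: dict) -> str:
    """Turn one spec node (and its children) into an HTML string."""
    kind = node["type"]
    if kind == "form":
        inner = "".join(render(child) for child in node.get("children", []))
        return f'<form id="{escape(node["id"])}">{inner}</form>'
    if kind == "text":
        return f"<p>{escape(node['value'])}</p>"
    if kind == "input":
        return (f'<label>{escape(node["label"])}'
                f'<input name="{escape(node["id"])}"></label>')
    if kind == "button":
        return (f'<button data-action="{escape(node["action"])}">'
                f'{escape(node["label"])}</button>')
    # Unknown types are rejected rather than guessed at.
    raise ValueError(f"unknown component type: {kind}")


html_out = render(json.loads(SPEC_JSON))
print(html_out)
```

Because the renderer only accepts component types it knows, the agent can rearrange or extend a screen freely while the set of things that can appear on it stays under the application's control.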

Consider a loan approval process: multiple source systems supply varied data about parties, conditions, interest terms, and covenants. The Financial Industry Business Ontology (FIBO) translates this data into a unified vocabulary familiar to any AI agent involved. A2UI complements this by defining how that data should appear on-screen, enabling agents to assemble user experiences in real time rather than relying on static forms set during design.
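The vocabulary step in the loan example can be sketched as follows. The source-system names, field names, and unified term names below are all hypothetical placeholders, not actual FIBO identifiers; a real deployment would map to the ontology's own terms.

```python
# Hypothetical field maps per source system. The unified term names are
# illustrative placeholders, not actual FIBO identifiers.
FIELD_MAPS = {
    "core_banking": {"cust_nm": "borrowerName", "int_rt": "interestRate"},
    "crm": {"client": "borrowerName", "covenant_txt": "covenant"},
}


def normalize(system: str, record: dict) -> dict:
    """Rename a source record's fields to the shared vocabulary, dropping unmapped ones."""
    mapping = FIELD_MAPS[system]
    return {mapping[key]: value for key, value in record.items() if key in mapping}


def merge(*records: dict) -> dict:
    """Combine normalized records; the first value seen for a term wins."""
    merged: dict = {}
    for record in records:
        for term, value in record.items():
            merged.setdefault(term, value)
    return merged


loan = merge(
    normalize("core_banking", {"cust_nm": "Acme Ltd", "int_rt": 5.25, "branch": "017"}),
    normalize("crm", {"client": "ACME LIMITED", "covenant_txt": "Debt/EBITDA below 3.0"}),
)
print(loan)
```

Once every system speaks the same terms, an agent can build a screen from `loan` without knowing which system each value came from.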

What makes this approach particularly clever is how it merges business logic with UX generation, reducing the traditional bottlenecks developers and designers face. Because a schema standardizes how components render and respond to interaction, teams can define reusable UI elements once and employ them repeatedly across different applications and workflows. Changes, from regulatory requirements to branding updates, can be handled by adjusting the A2UI spec or the ontology rather than recoding multiple screens, making businesses more agile and resilient.
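The define-once, reuse-everywhere idea can be illustrated with a small sketch. The `CATALOG` structure and `build_screen` helper are hypothetical and are not part of any A2UI specification; they only show how a single edit to a shared definition reaches every screen built from it.

```python
from copy import deepcopy

# Hypothetical shared component catalog, defined once.
CATALOG = {
    "header": {"type": "header", "logo": "old-logo.png", "title": ""},
    "disclosure": {"type": "text", "value": "Rates are subject to change."},
}


def build_screen(title: str, component_names: list[str]) -> list[dict]:
    """Assemble a screen from catalog entries, copying so screens stay independent."""
    screen = []
    for name in component_names:
        component = deepcopy(CATALOG[name])
        if component["type"] == "header":
            component["title"] = title
        screen.append(component)
    return screen


before = build_screen("Loan review", ["header", "disclosure"])

# A branding change is one edit to the shared definition...
CATALOG["header"]["logo"] = "new-logo.png"

# ...and every screen generated afterwards picks it up.
loan_screen = build_screen("Loan review", ["header", "disclosure"])
kyc_screen = build_screen("KYC check", ["header"])
print(loan_screen[0]["logo"], kyc_screen[0]["logo"])
```

Since screens are generated on demand rather than stored as finished layouts, there is no backlog of existing forms to patch after the change.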

Dynamic screen generation also maintains a continuous connection between the user interface and the AI agent’s underlying logic. Button clicks, form submissions, and other events are tracked and answered within a single pane of glass, such as a chatbot interface, offering users a seamless yet richly interactive experience. Compact encodings like Token-Oriented Object Notation (TOON) further improve efficiency by packing schema and context into fewer tokens, readying these solutions for broader deployment.
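The event round trip described above might look like the sketch below. The event envelope (`action`, `payload`) and the handler registry are assumptions made for illustration; they are not the AG-UI message format. The agent-side handler replies with a new spec fragment, which the renderer would then draw in the same conversation.

```python
import json
from typing import Callable

# Hypothetical registry mapping UI actions to agent-side handlers.
Handler = Callable[[dict], dict]
HANDLERS: dict[str, Handler] = {}


def on(action: str):
    """Decorator that registers a handler for a named UI action."""
    def register(fn: Handler) -> Handler:
        HANDLERS[action] = fn
        return fn
    return register


@on("approve_loan")
def approve_loan(payload: dict) -> dict:
    # Reply with a spec fragment for the renderer to display.
    return {"type": "text", "value": f"Loan {payload['loan_id']} approved."}


def handle_event(raw: str) -> dict:
    """Decode a UI event and route it to the matching handler."""
    event = json.loads(raw)
    handler = HANDLERS.get(event["action"])
    if handler is None:
        return {"type": "text", "value": f"Unsupported action: {event['action']}"}
    return handler(event.get("payload", {}))


reply = handle_event('{"action": "approve_loan", "payload": {"loan_id": "L-42"}}')
print(reply)
```

Returning a spec fragment instead of free text keeps the exchange inside the same schema the renderer already understands.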

From a business perspective, this shift is more than a UX upgrade. It minimizes reliance on static UI development and keeps the spotlight on business rules and domain knowledge embedded in ontologies. As companies face constant market shifts, regulatory updates, and M&A activity, A2UI’s flexible architecture allows UI changes, like swapping logos on thousands of forms, to ripple through their digital workflows, enhancing employee productivity and compliance.

With startups like CopilotKit pioneering renderers that implement this dynamic UI strategy, and AI models progressing toward auto-generating compliant screens through pre-training, A2UI could soon become the standard for human-agent interfaces. It offers a pathway to elevate AI assistants from scripted tools to genuinely adaptive collaborators without sacrificing control or clarity for the user.

The real tension lies in how fast organizations can shift their UI development mindsets to embrace such a data-driven, schema-bound interface design, and whether existing enterprise stacks will flex accordingly. But if successful, this could mark the beginning of AI systems that not only think dynamically but also communicate through interfaces that evolve in real time alongside them.