Nvidia is recalibrating its AI hardware roadmap, highlighting a shift from its planned CPX chip toward deploying Groq 3 LPUs in tandem with its new Vera Rubin GPUs. Ian Buck, Nvidia’s VP of Hyperscale and HPC, revealed at GTC 2026 that CPX has been postponed to prioritize refining token decode performance via LPUs this year. This approach reflects Nvidia’s strategic focus on boosting AI token generation speeds economically while also introducing the agentic Vera CPU optimized for AI workloads.

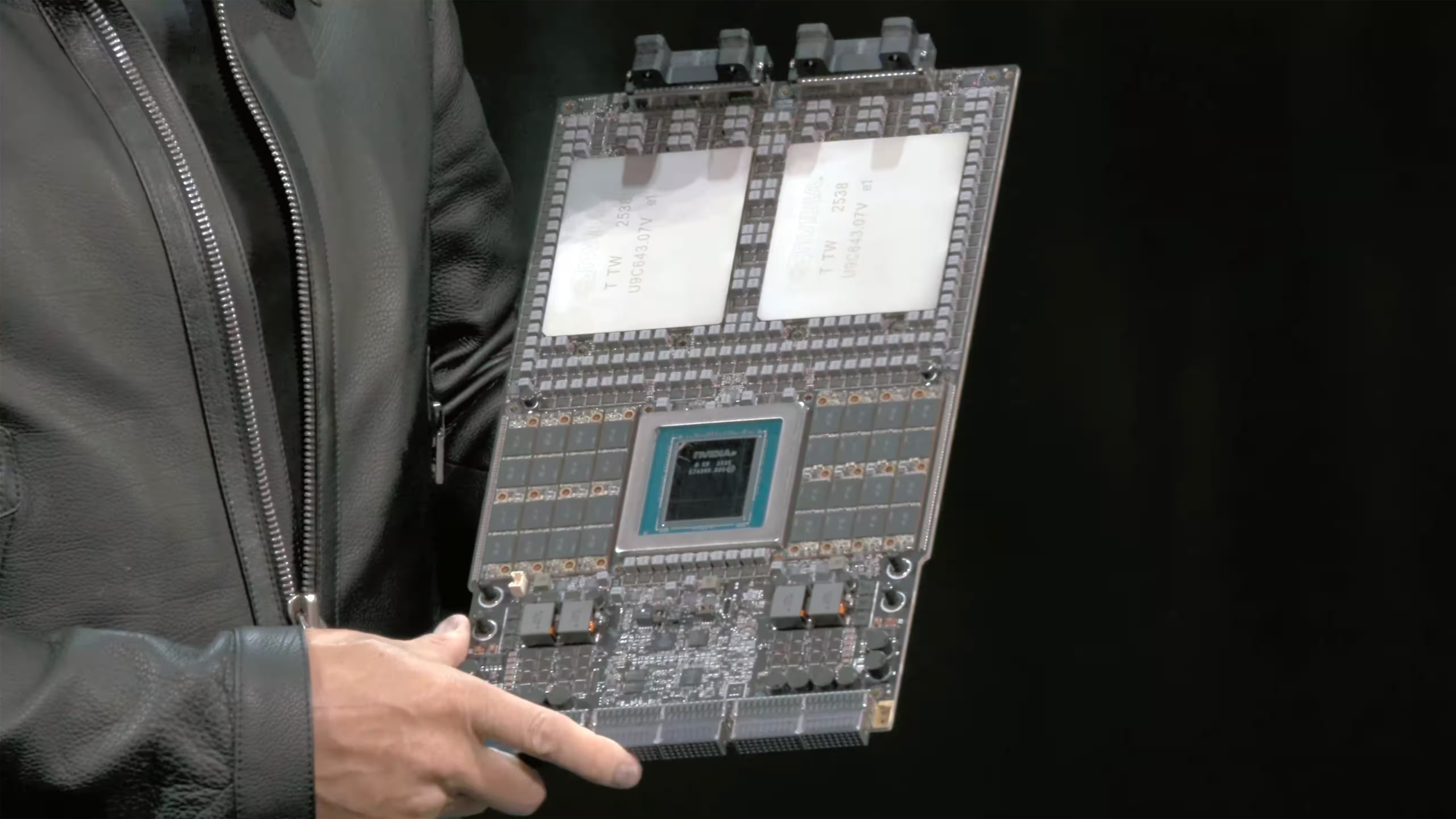

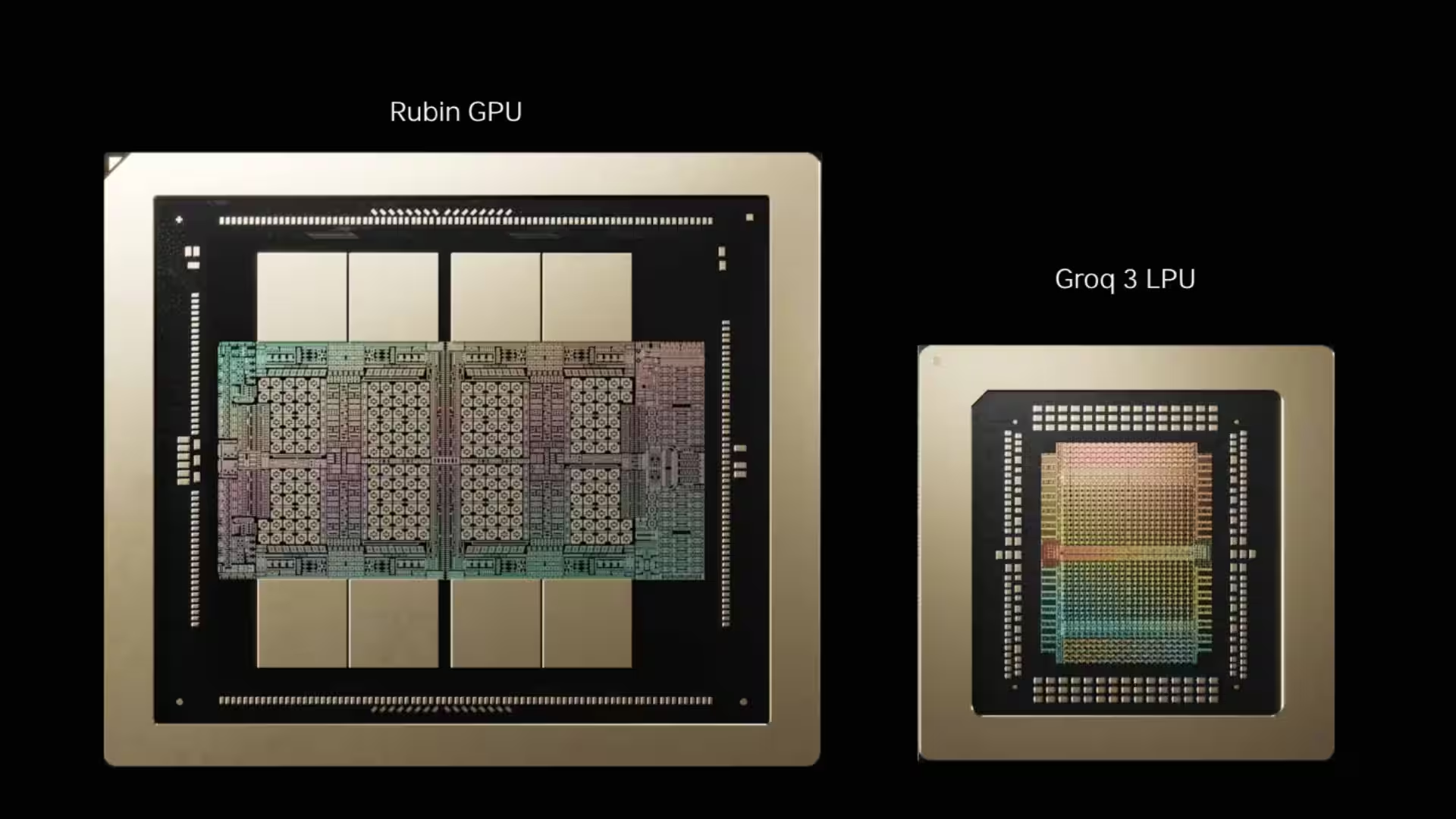

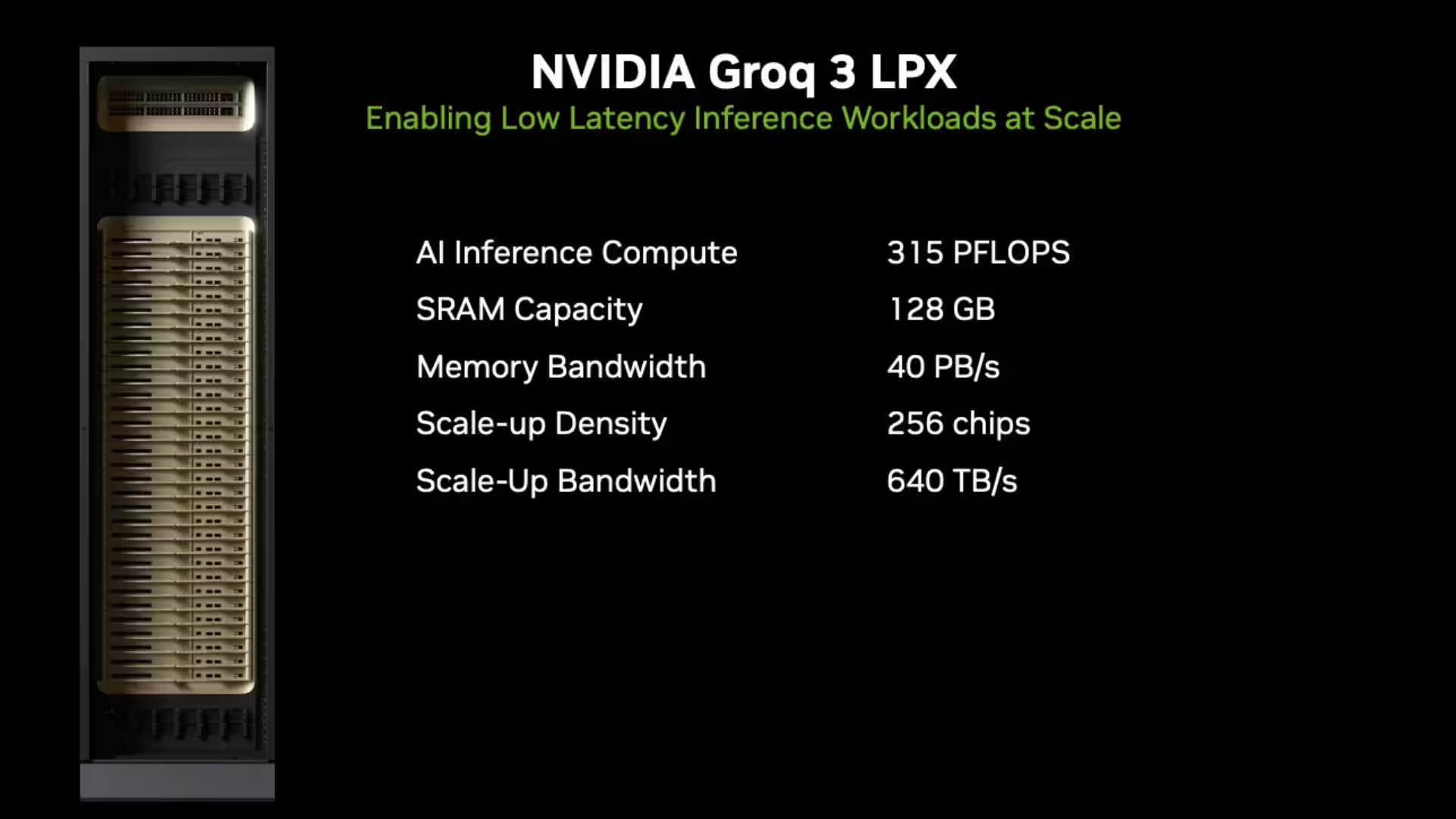

The Groq 3 LPU rack, consisting of 256 LPUs interlinked with a Vera Rubin NVL72 GPU, will handle AI model decode tasks, splitting token generation workloads between LPUs and GPUs. LPUs excel at memory-heavy operations with fast SRAM, while GPUs focus on attention calculations such as softmax and routing. This mix maximizes speed and efficiency by keeping the large KV cache in high-bandwidth memory on GPUs, reserving LPUs for critical real-time computations.

Buck emphasized Dynamo software’s critical role in orchestrating this hybrid architecture, fostering an active developer community with substantial external contributions. Dynamo manages disaggregated GPU resources and distributes decoding tasks efficiently, proving pivotal to Nvidia’s AI ”factory” concept launched last year.

Nvidia Vera CPU designed for agentic AI workloads

The Vera CPU marks Nvidia’s ambitious venture into agentic workloads-AI scenarios requiring rapid single-thread performance combined with high memory bandwidth and energy efficiency. The dual-socket Vera server module runs diverse workloads including Python interpreters during training loops, SQL queries, and HPC tasks. It is not designed as a general-purpose x86 replacement, rather as a specialized CPU to accelerate AI model training and deployment phases where CPUs orchestrate code snippets, score outputs, and handle procedural logic in parallel with GPUs.

While Vera’s high core count and interface to GPUs enable tight integration in AI workflows, Nvidia does not aim to replace x86 CPUs broadly; the focus remains workload-specific. System partners like Dell and HP receive Vera reference modules to build integrated AI servers but could technically create alternative systems, though Nvidia expects them to follow recommended agentic AI use cases for maximum benefit.

Groq LPUs and Vera Rubin GPUs optimize AI token generation

Regarding Nvidia’s collaboration with Intel on NVLink Fusion, Buck confirmed ongoing progress in integrating an IP block and chiplet to enable high-bandwidth connections between Intel CPUs and Nvidia GPUs. Integration efforts are complex and silicon-level, with manufacturing and IP deployment decisions shared between partners. However, Buck deflected questions about Nvidia IP running on Intel’s process nodes, leaving such strategic decisions to CEO Jensen Huang’s purview.

Nvidia still considers CPX ”a good idea” for future use cases requiring extremely high token throughput at massive scale, such as agent-to-agent AI running trillion-parameter models with extensive KV caches. However, the current economics and technical constraints favor the LPUs paired with Vera Rubin GPUs, which achieve a balance between performance and cost for this year’s deployments. LPUs alone demand many chips due to limited on-chip SRAM, while pairing with GPUs reduces chip count dramatically without sacrificing token generation speed.

Buck detailed how the LPX racks and Vera Rubin GPUs complement each other, with LPUs’ superior memory bandwidth driving mixture-of-experts layers and GPUs executing attention math. This synergy reaches token generation rates exceeding 1,000 tokens per second with feasible rack counts, enabling AI data centers operating at 100 to 1,000 megawatt scales to deploy models economically for real-world workloads.

Future Nvidia hardware roadmap includes NVLink and optical connectivity

On the hardware front, LPX chips do not yet support Nvidia’s proprietary NVLink chip-to-chip interconnect but will add this capability in the next LP40 generation. The roadmap also includes expanding compute precision with FP4 formats and tensor operations akin to Nvidia’s GPUs. Optical connectivity will integrate with Vera’s design, utilizing co-packaged optics to enhance rack-to-rack bandwidth and reduce latency-a crucial factor as AI scales beyond current model sizes.

Buck highlighted innovations in Nvidia’s server architecture, emphasizing copper-based NVLink interconnects within racks for exceptional bandwidth and power efficiency. The company’s plans include scaling GPU counts from 72 per rack today to prototypes supporting 576 GPUs interconnected via dual-layer NVLink switches, with architectures capable of pushing toward 1,152 GPUs, anticipating future AI model demands.

Software optimizations critical to Nvidia’s AI performance gains

Software remains a critical competitive advantage; Buck disclosed efforts optimizing AI models like DeepSeek through extensive GPU-hour simulations and software kernel tuning. These optimizations provided up to 4x speed improvements on existing GPUs without new hardware. The investment in software underscores Nvidia’s belief that chip speed alone doesn’t guarantee AI performance; system-wide orchestration and software innovation are equally important to unlock true gains.