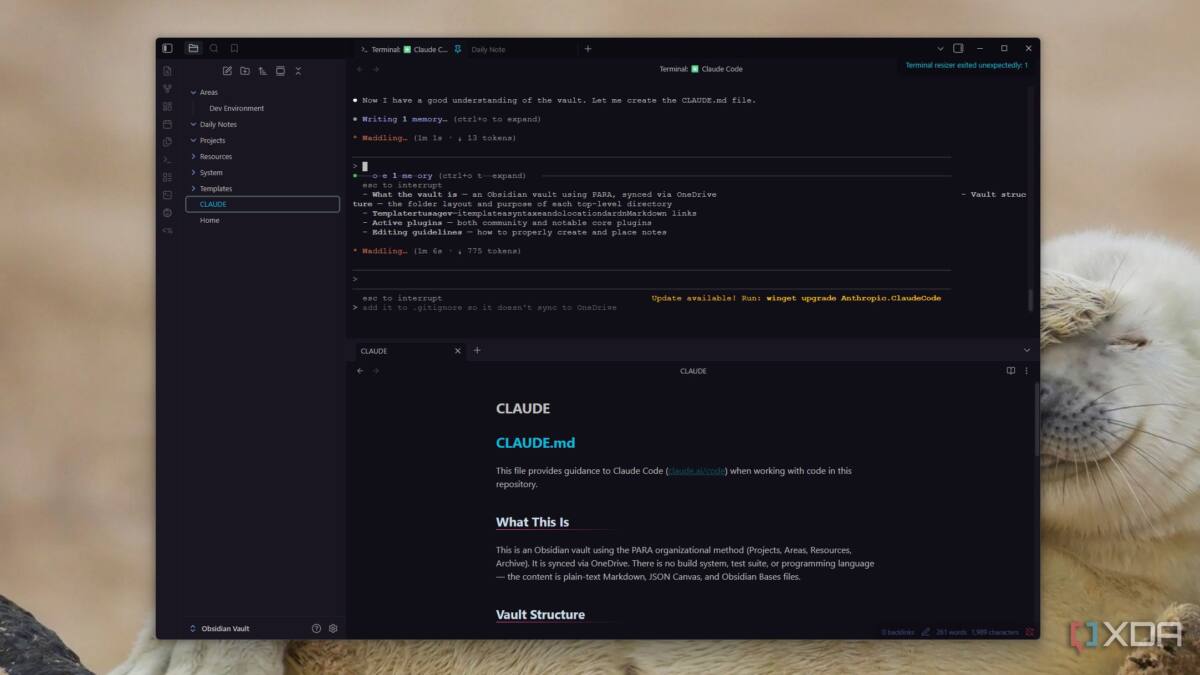

AGENTS.md and CLAUDE.md files were supposed to make AI-assisted software development easier by providing context. These human-readable guides were introduced to help large language models (LLMs) like Claude understand a project’s workflow and domain knowledge without constant human hand-holding. However, recent research and real-world experience suggest these context files now offer minimal improvement, and their overhead may be hindering progress.

AGENTS.md emerged in early 2025 as an open standard for documenting repository-specific development workflows. Designed by OpenAI and now maintained by the Agentic AI Foundation, this format mimics onboarding documentation for junior developers, listing environment tips, testing instructions, and PR guidelines in plain language. For example, it includes commands for jumping to packages or running tests, along with best-practice suggestions on code modifications.

This approach made sense when AI coding agents had tight context windows and lacked awareness of project structure. It let them parse instructions the way a human developer would, and earlier studies reported output-quality gains of up to 36%. But AI tools have advanced rapidly since then. Research conducted in 2026 by ETH Zurich shows these context files now improve results by only around 5% or less and sometimes even degrade output quality, particularly when the files are themselves auto-generated by AI.

One key issue is that AGENTS.md and CLAUDE.md files often contain high-level overviews that modern LLMs can infer directly from the codebase. Meanwhile, these context files increase the reasoning tokens consumed per query by as much as 20%, inflating API costs and slowing workflows for minimal benefit. The time invested in creating and maintaining these README-style guides is increasingly hard to justify.
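
To make the overhead concrete, here is a rough back-of-the-envelope sketch of what a 20% token increase could cost. The baseline token count, query volume, and price per million tokens are hypothetical placeholders, not figures from the research; substitute your own numbers.

```python
def extra_cost(base_tokens_per_query: int,
               overhead_fraction: float,
               queries: int,
               price_per_million_tokens: float) -> float:
    """Estimate the added spend caused by a context file's token overhead.

    All inputs are assumptions supplied by the caller; only the 20% figure
    used in the example below comes from the article.
    """
    extra_tokens = base_tokens_per_query * overhead_fraction * queries
    return extra_tokens / 1_000_000 * price_per_million_tokens


if __name__ == "__main__":
    # Hypothetical workload: 8,000 tokens per query, 10,000 queries,
    # and a made-up price of 10.00 per million tokens.
    cost = extra_cost(8_000, 0.20, 10_000, 10.0)
    print(f"Estimated extra spend: {cost:.2f}")
```

Under these assumed numbers the context file adds 16 million tokens, which is the kind of figure worth weighing against a quality gain of 5% or less.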

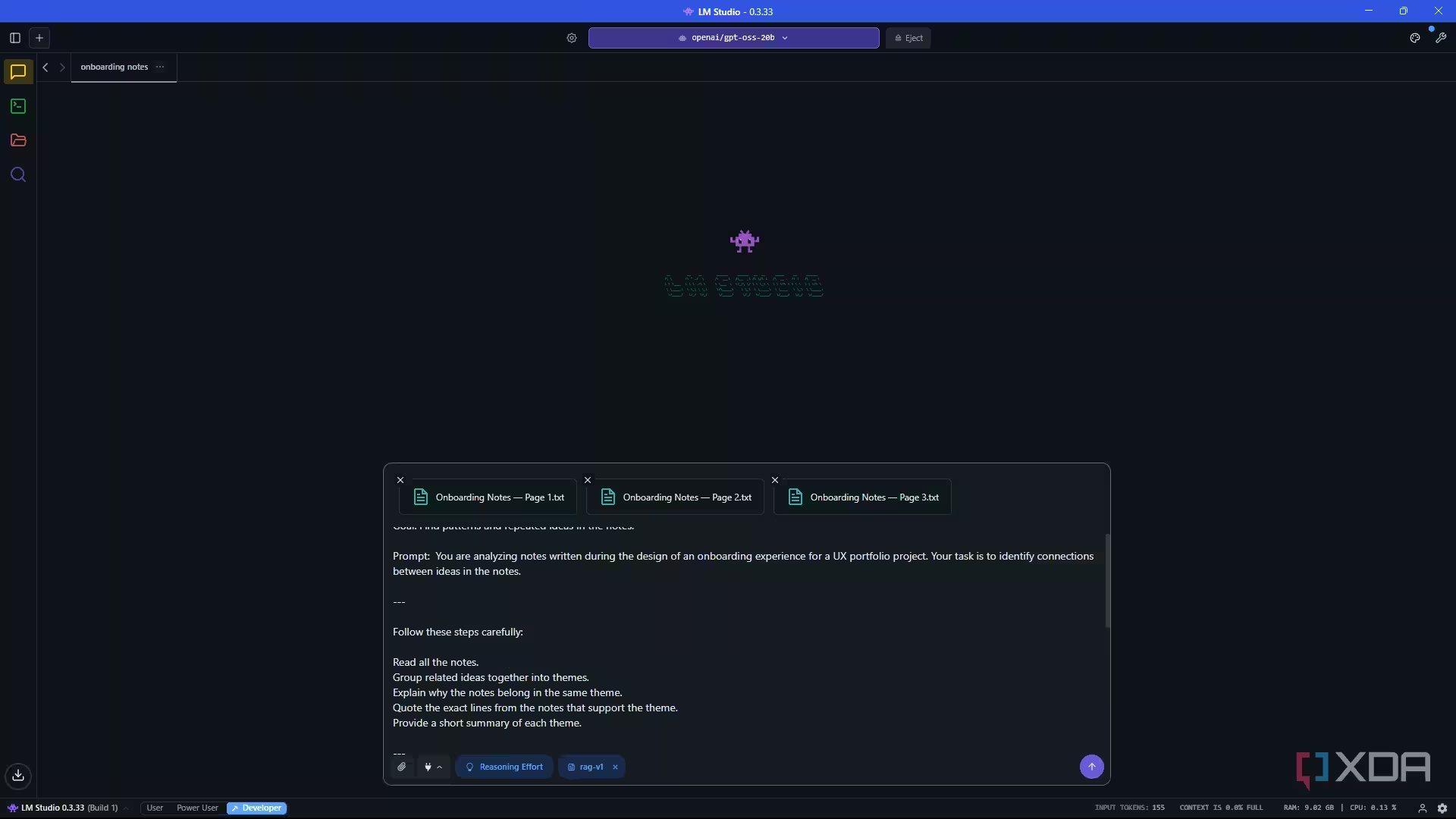

Rather than packing all context upfront, the latest best practice favors leaner, more explicit prompts combined with dynamic retrieval of relevant information. Instead of a monolithic AGENTS.md file, a Model Context Protocol (MCP) server or database can supply targeted snippets of knowledge as needed, reducing token waste and focusing AI reasoning where it truly counts. Tightening the prompt structure with directions like “respond with (1) analysis, (2) patch, (3) tests to update” guides models to deliver actionable output without unnecessary verbosity.
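
The idea can be sketched in a few lines of Python. This is a minimal illustration, not an MCP implementation: the in-memory `SNIPPETS` dictionary stands in for whatever server or database holds project knowledge, the snippet texts are invented, and the keyword-overlap scoring is a deliberately naive substitute for real retrieval.

```python
import re

# Hypothetical project notes; in practice these would come from an MCP
# server or a database rather than a hard-coded dictionary.
SNIPPETS = {
    "testing": "Tests live in tests/ and run with pytest.",
    "billing": "The billing module rounds currency to two decimals.",
    "deployment": "Deployments are triggered from the release branch.",
}

# Small stop-word list so common words do not count as matches.
STOP_WORDS = {"the", "in", "a", "an", "to", "and", "with", "from", "are", "of"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its distinct non-stop-word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOP_WORDS


def retrieve(task: str, snippets: dict[str, str], limit: int = 2) -> list[str]:
    """Return up to `limit` snippets that share the most words with the task."""
    task_words = tokenize(task)
    scored = []
    for topic, text in snippets.items():
        score = len(task_words & tokenize(topic + " " + text))
        if score > 0:
            scored.append((score, topic, text))
    # Highest overlap first; ties broken alphabetically by topic.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [text for _, _, text in scored[:limit]]


def build_prompt(task: str, snippets: dict[str, str], limit: int = 2) -> str:
    """Assemble a lean prompt: task, retrieved notes, fixed response format."""
    lines = ["Task: " + task]
    notes = retrieve(task, snippets, limit)
    if notes:
        lines.append("Relevant project notes:")
        lines.extend("- " + note for note in notes)
    lines.append("Respond with (1) analysis, (2) patch, (3) tests to update.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt("Fix the failing tests in the billing module", SNIPPETS))
```

Only the notes that match the task reach the model, so an unrelated topic such as deployment never consumes tokens for a billing fix.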

Anthropic, the company behind Claude, has adopted a similar mindset with “Claude Skills,” which break down repetitive agent tasks into modular units rather than loading massive files per repository. This approach exemplifies how agentic coding tools are evolving beyond the initial generation of context helpers. The industry is moving toward smarter prompting techniques and retrieval augmentation instead of bloated instruction files.

In essence, the early era of AGENTS.md was a practical experiment aimed at compensating for crude LLMs and token limits. Now that those boundaries have lifted, mainly through larger models and improved retrieval systems, forcing agents to parse thick contextual documents is outdated. The focus should instead be on mastering precise prompting, modular context sharing, and live knowledge calls.

Will the Model Context Protocol spark a new wave of tooling, or will developers build entirely fresh workflows for AI augmentation? What’s clear is that AI-assisted development is moving away from static, repository-wide manuals toward more flexible, dynamic solutions optimized for today’s powerful but costly language models.