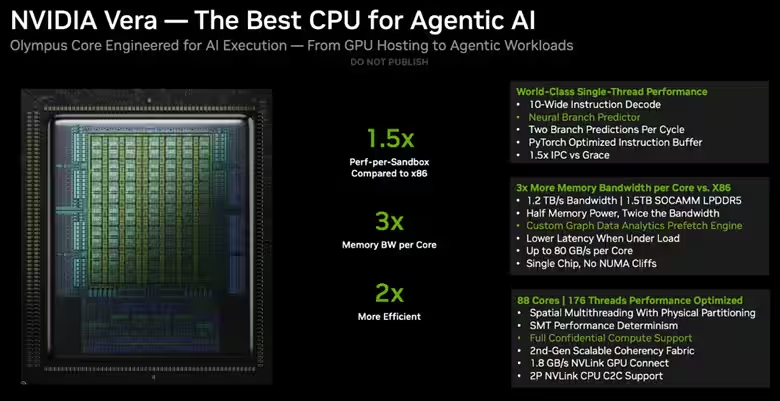

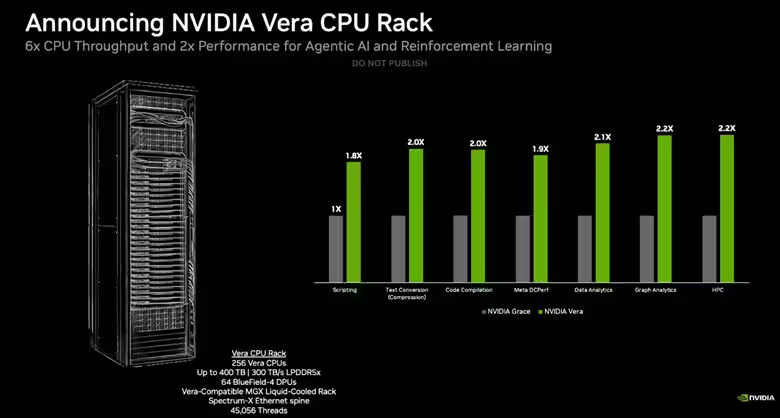

Nvidia has unveiled its 88-core Vera data center CPUs, a bold move into the traditional CPU market long dominated by AMD and Intel. Revealed at GTC 2026 in San Jose, the new processors promise up to 50% better performance than typical CPUs, thanks to a 1.5x gain in instructions per cycle (IPC) from custom Olympus cores and a high-bandwidth design aimed at agentic AI workloads. Alongside the CPUs comes a new Vera CPU Rack that integrates 256 liquid-cooled chips to deliver six times the throughput and double the performance in AI tasks. Together they position Nvidia to challenge both the established x86 vendors and the custom Arm chip makers that serve hyperscalers.

Nvidia designed the 88-core Vera CPU with 176 threads, two per core, pushing both core count and per-core performance beyond its previous Grace processors. The new Olympus cores build on Arm v9.2-A technology and use spatial multi-threading rather than the time-sliced simultaneous multi-threading found in most CPUs. This approach lets two threads run simultaneously within one core by physically isolating pipeline resources, which improves throughput and performance predictability, both crucial for data centers running heavy multi-tenant workloads.

Vera’s architecture places all cores in a single coherent domain, avoiding the latency issues common with NUMA setups in high-core-count x86 chips. This is enabled by an upgraded Nvidia Scalable Coherency Fabric mesh network, likely based on Arm’s latest Neoverse CMN S3 design, and it more than doubles memory bandwidth to 1.2 TB/s from 546 GB/s in Grace. The design prioritizes bandwidth and latency, achieving up to 80 GB/s per core under uneven load, a boon for AI and analytics applications.
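
To put those bandwidth figures in proportion, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers quoted above and assumes decimal units (1 TB/s = 1,000 GB/s). The derived ratios are illustrative arithmetic, not figures published by Nvidia, and the variable names are hypothetical.

```python
# Figures quoted in the article (vendor claims, decimal units assumed).
CORES = 88
VERA_BW_GBPS = 1200.0        # 1.2 TB/s total memory bandwidth
GRACE_BW_GBPS = 546.0        # previous-generation Grace
PEAK_PER_CORE_GBPS = 80.0    # per-core peak under uneven load

# Generation-over-generation bandwidth gain.
generation_gain = VERA_BW_GBPS / GRACE_BW_GBPS

# Bandwidth each core would get if all 88 cores shared it evenly.
even_share_gbps = VERA_BW_GBPS / CORES

# How far a single busy core can exceed that even share.
burst_ratio = PEAK_PER_CORE_GBPS / even_share_gbps

# Number of cores that could run at the per-core peak before
# the total bandwidth is exhausted.
cores_at_peak = VERA_BW_GBPS / PEAK_PER_CORE_GBPS

print(f"Gain over Grace:        {generation_gain:.2f}x")
print(f"Even share per core:    {even_share_gbps:.1f} GB/s")
print(f"Peak vs. even share:    {burst_ratio:.2f}x")
print(f"Cores at per-core peak: {cores_at_peak:.0f}")
```

Under these assumptions, a single core can draw nearly six times its even share of bandwidth, which is what makes the "uneven load" case meaningful: a handful of memory-hungry threads are not capped at 1/88th of the total.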

- 88 cores and 176 threads

- 1.5x IPC improvement over predecessors

- Up to 1.2 TB/s memory bandwidth with up to 1.5 TB of LPDDR5

- Custom Olympus Arm v9.2-A cores with spatial multi-threading

- NVLink-C2C die-to-die interface with 1.8 TB/s bandwidth

- Supports PCIe 6.0, CXL 3.1, and Confidential Computing

The Vera CPU Rack incorporates 256 liquid-cooled Vera CPUs alongside 74 BlueField-4 DPUs and ConnectX SuperNIC networking, for a combined 45,056 threads and up to 400 TB of shared LPDDR5 memory with 300 TB/s of aggregate throughput. Nvidia claims this configuration can support 22,500 independent CPU environments simultaneously. The company demonstrated 1.8x to 2.2x improvements over last-generation Grace chips on legacy workloads ranging from scripting and compilation to data analytics.
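
The rack-level totals follow from the per-CPU figures, as this short Python sketch shows. It assumes two threads per core (as described for the Olympus cores) and simple linear scaling across 256 CPUs. The function name `rack_totals` is hypothetical, and the threads-per-environment value is derived arithmetic rather than an Nvidia specification.

```python
# Per-CPU figures quoted in the article.
CORES_PER_CPU = 88
THREADS_PER_CORE = 2           # spatial multi-threading: two threads per core
MEMORY_TB_PER_CPU = 1.5        # "up to" figure
BANDWIDTH_TBPS_PER_CPU = 1.2
CPUS_PER_RACK = 256
ENVIRONMENTS = 22_500          # Nvidia's claimed independent CPU environments


def rack_totals(cpus: int = CPUS_PER_RACK) -> dict:
    """Scale the per-CPU figures linearly to a rack of `cpus` chips."""
    threads = cpus * CORES_PER_CPU * THREADS_PER_CORE
    return {
        "threads": threads,
        "memory_tb": cpus * MEMORY_TB_PER_CPU,
        "bandwidth_tbps": cpus * BANDWIDTH_TBPS_PER_CPU,
        "threads_per_environment": threads / ENVIRONMENTS,
    }


totals = rack_totals()
for name, value in totals.items():
    print(f"{name}: {value:,.2f}")
```

The thread count comes out to exactly 45,056, while memory (384 TB) and bandwidth (about 307 TB/s) land close to the rounded 400 TB and 300 TB/s headline numbers. Dividing threads by the claimed 22,500 environments gives roughly two threads, or about one physical core, per environment.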

This announcement builds on Nvidia’s recent close collaboration with Meta, which plans to deploy these CPU-only systems broadly. Vera racks are also set to reach hyperscalers such as Oracle and Alibaba, as well as cloud providers CoreWeave and Nebius. Multiple OEMs, including Dell, HPE, Lenovo, and Supermicro, will produce single- and dual-socket systems featuring Vera CPUs, broadening the chips’ market reach.

Beyond standalone CPU use, Vera chips integrate into Nvidia’s larger Vera Rubin platform, which combines CPUs with GPUs, networking switches, and data processing units (DPUs) to power next-generation AI supercomputers. The 88-core Vera chips are already in full production, with deliveries expected in the second half of this year, putting them on track to challenge x86 incumbents and custom Arm designs in a data center CPU market hungry for specialization and efficiency.