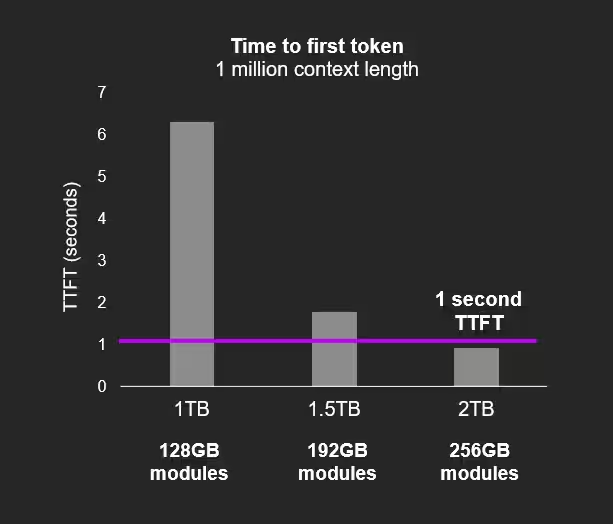

Micron has begun sampling its latest 256GB SOCAMM2 memory modules to customers, marking a significant leap in memory density and efficiency for AI datacenters. These modules expand capacity by a third over the previous 192GB generation and promise about 66% better power efficiency than traditional RDIMM solutions. Such improvements bring the long-anticipated milestone of packing 2TB of RAM into a single CPU’s memory pool closer to reality.

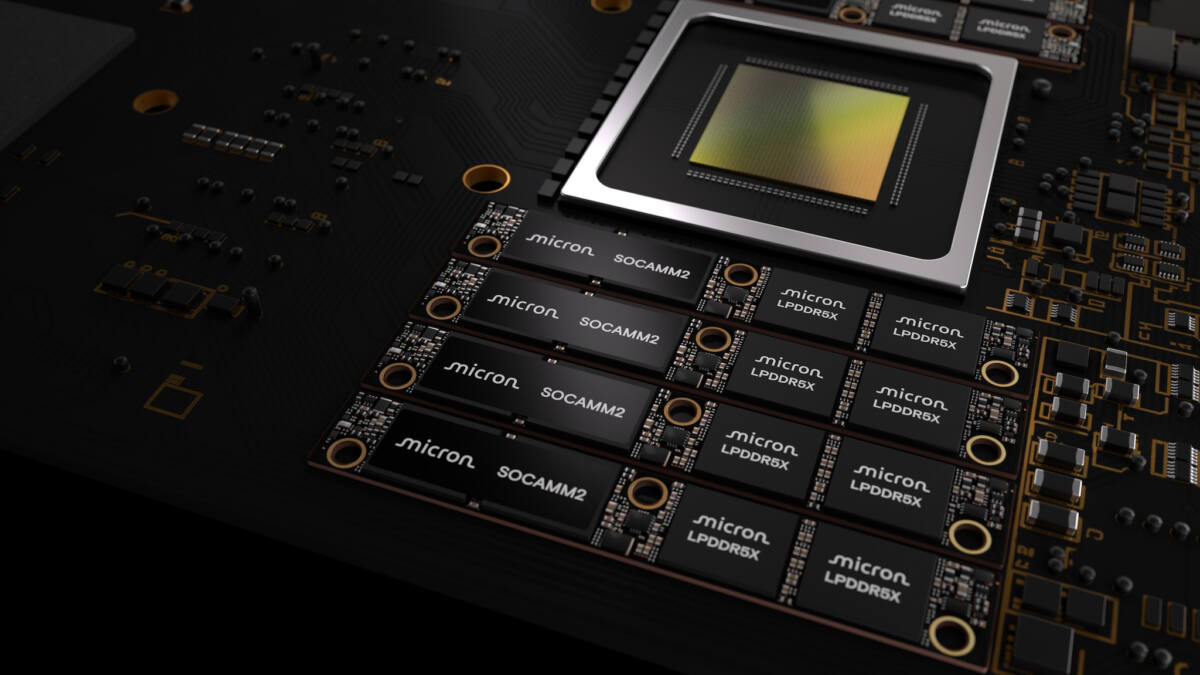

While AI performance discussions often zero in on accelerators and their raw compute speeds, memory capacity and power consumption constitute a critical battleground. The new SOCAMM2 modules leverage Micron’s 32 Gb LPDDR5X monolithic dies, where all memory components and related circuitry are integrated into a single chip-streamlining design and efficiency. This makes them compatible with the liquid-cooling setups increasingly favored in AI server environments to manage heat dissipation.

One direct benefit of having such vast amounts of RAM tightly coupled with processing units is the ability to run AI models with drastically larger context windows. This capability reduces ”Time To First Token” (TTFT), meaning AI responses become available quicker-a critical advantage in real-time applications. As AI workloads grow in scale and complexity, memory proximity and size become as vital as raw computation.

The SOCAMM2 form factor is the product of a collaboration between Nvidia and memory manufacturers including Micron, Samsung, and SK hynix. Nvidia originally developed a bespoke SOCAMM standard, but early versions struggled with overheating on high-density servers. Partnering with experienced memory makers helped refine the design for higher capacity and lower power consumption that can function reliably in demanding, liquid-cooled systems.

This development arrives amid substantial capital expenditure by hyperscalers and AI-focused enterprises seeking to outpace competitors in efficiency and capability. Although headline-grabbing AI chip performance grabs attention, advances like Micron’s memory leap are quietly reshaping the infrastructure. They represent a shift from chasing pure compute to optimizing the entire system stack, with memory acting as a linchpin.

The push for ultra-dense, power-efficient memory is also a response to the limitations of existing server RAM standards, which can become bottlenecks both in speed and thermal management when scaled up. By integrating monolithic LPDDR5X dies in a modular form factor compatible with emerging cooling and server architectures, Micron helps enable the next generation of AI datacenters that demand both scale and stability.

As AI models balloon in size and complexity, memory innovations like these highlight that breakthroughs won’t come from processors alone. The question ahead is whether other memory makers will accelerate efforts to match or surpass Micron’s strides, or if we’ll see more consolidation around SOCAMM2 as a new standard for AI-ready server memory.