OpenAI appears to be quietly fixing a pricing problem it helped create: there’s a huge gap between the $20 ChatGPT Plus plan and the $200 Pro tier, and some of its most active users have nowhere obvious to land.

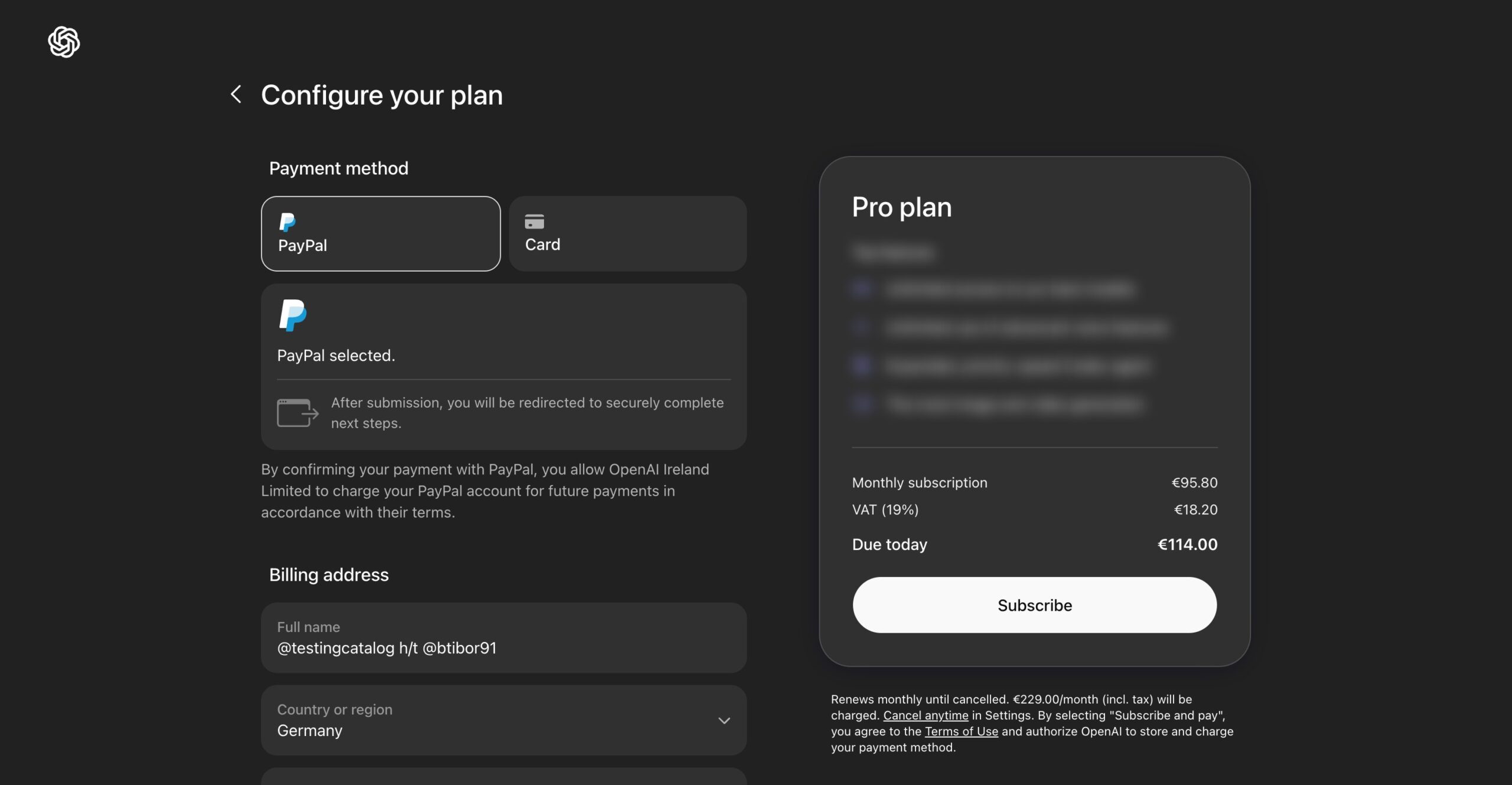

That gap is where a rumored ChatGPT “Pro Lite” at $100 per month would sit. Developer Tibor Blaho discovered references to the plan in the ChatGPT web app’s frontend code, a find that suggests OpenAI is testing or preparing a mid-tier offering. OpenAI has not confirmed the plan or given a timetable for launch.

Why the middle tier matters

Right now OpenAI’s consumer lineup reads: Free, Go ($8/month), Plus ($20/month), Pro ($200/month), plus Team, Business, and Enterprise options. For many freelancers, indie developers and researchers, jumping from $20 to $200 is too steep; they need more usage and features than Plus but not the full Pro package.

The leaked code suggests Pro Lite would sit between those two tiers – offering more advanced features than Plus but fewer than Pro. It also hints at a usage quota for deep reasoning models three to five times that of Plus, and a possible allowance for Codex usage.

This is about economics as much as convenience

OpenAI’s pricing moves happen against a brutal financial backdrop: the company reportedly pulled in $20 billion in revenue but faces a projected $14 billion annual loss driven by rising compute bills, and has been described as seeking a roughly $100 billion funding lifeline. A $100 tier is an obvious lever – it targets higher-value users while promising a quicker route to meaningful monthly revenue than trying to coax everyone up to $200.

There’s also competitive pressure. Rivals have been carving slices of the market via platform integration and enterprise deals, and user dissatisfaction over recent product moves has made loyalty shakier. A mid-tier product is an attempt to staunch churn among power users without undercutting enterprise pricing.

Who wins, who loses

Winners: freelancers, solo developers and researchers who need more tokens or model time than Plus but can’t justify Pro. OpenAI wins too if enough of those users upgrade, because moving a subscriber from $20 to $100 multiplies per-user revenue fivefold without the sales overhead of enterprise deals.

Losers: OpenAI’s own Pro revenue, if would-be Pro subscribers settle for Pro Lite instead, and rivals that rely on a sparse mid-market. There’s also a subtle risk that OpenAI simply shifts usage patterns without reducing compute spend – heavier usage from more $100 customers could keep costs high.
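To put rough numbers on that trade-off, here is a minimal back-of-the-envelope sketch in Python. It uses only the three monthly prices reported above. The function names and the user counts are hypothetical, chosen purely for illustration, and the sketch ignores compute cost, churn and brand-new subscribers.

```python
# Monthly prices in USD as reported in the article.
# Pro Lite is a rumored plan, not a confirmed one.
PLUS, PRO_LITE, PRO = 20, 100, 200


def net_monthly_revenue_change(plus_upgraders: int, pro_downgraders: int) -> int:
    """Change in monthly subscription revenue when users move to Pro Lite.

    Simplification: ignores compute cost, churn and new sign-ups.
    """
    gain = plus_upgraders * (PRO_LITE - PLUS)    # +$80 per Plus user who upgrades
    loss = pro_downgraders * (PRO - PRO_LITE)    # -$100 per Pro user who downgrades
    return gain - loss


def break_even_upgrades_per_downgrade() -> float:
    """Number of Plus upgrades needed to offset a single Pro downgrade."""
    return (PRO - PRO_LITE) / (PRO_LITE - PLUS)


if __name__ == "__main__":
    # Hypothetical user counts, not reported figures.
    print(net_monthly_revenue_change(plus_upgraders=1000, pro_downgraders=500))
    print(break_even_upgrades_per_downgrade())
```

Under these assumptions each Pro downgrade costs $100 a month and each Plus upgrade adds $80, so the plan only raises subscription revenue if more than 1.25 Plus users move up for every Pro user who moves down.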

What OpenAI needs to get right

First, clarify caps and performance. Mid-tier buyers care about predictable throughput and model access; vague promises of “more” than Plus won’t convert many. Second, protect against cannibalization: Pro Lite must be meaningfully different from Pro so teams with expense accounts still see value in the higher tier. Third, pair the pricing change with product controls that actually reduce compute waste – smarter session timeouts, clearer per-request settings, or finer-grained token accounting.

Finally, expect trade-offs. Adding a $100 plan can boost average revenue per user, but it won’t close a multi-billion-dollar compute gap on its own. OpenAI will still need cheaper compute, enterprise wins, or structural changes to how models are served to materially change its bottom line.

What happens next

The public clues are thin – a frontend code discovery and a lot of context about why a mid-tier makes sense. If Pro Lite appears, watch the fine print: quotas, model access, and any new tooling for cost control. Those details determine whether this is a thoughtful new product or just a price shuffle that delays tougher decisions.

For now, consider Pro Lite a pragmatic move: not an act of generosity, but a targeted attempt to monetize the people who use ChatGPT the most – and to buy OpenAI more runway while it figures out the longer-term economics of running giant models.