Nvidia says it is using artificial intelligence to chew through parts of chip design that used to soak up months of engineering time, and in at least one case the company claims an AI tool now finishes overnight what once took a team of eight people about 10 months. That is less “future of work” than “your spreadsheets are on borrowed time”.

The company’s top scientist, Bill Dally, described the shift during a conversation at GTC with Google chief scientist Jeff Dean. The punchline is straightforward: Nvidia is not handing chip design over to robots, but it is pushing AI into more of the boring, repetitive, and error-prone parts of the pipeline, where speed matters and human patience tends to leak out first.

NB-Cell cuts library porting from months to one night

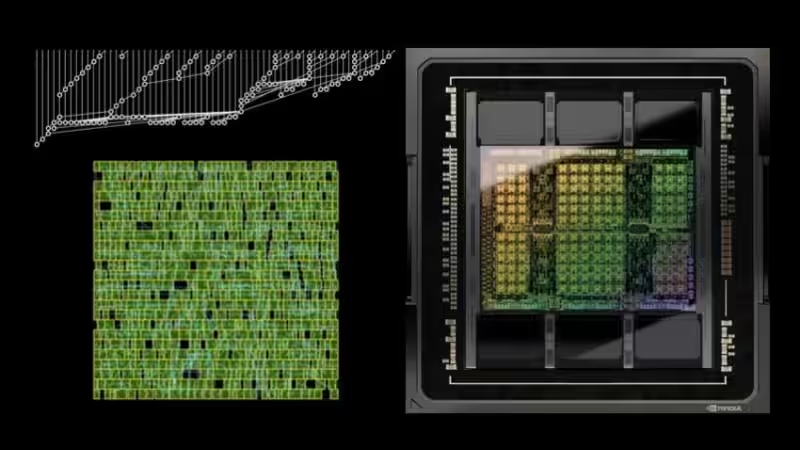

The clearest example is NB-Cell, Nvidia’s reinforcement-learning tool for moving its standard cell library to a new semiconductor process. Dally said the library contains roughly 2,500 to 3,000 cells, and porting it once required eight engineers working for about 10 months, or 80 person-months in total.

Now, Nvidia says the job can be done in one night on a single GPU. The output is not a toy result, either: the generated cells are said to match human designs on size, power consumption, and delay, or beat them. That matters because standard-cell migration is one of those unglamorous but essential jobs that can slow down every new process node, especially as chip design gets more expensive and process shrinks get harder.

- Old method: 8 engineers, about 10 months
- New method: one night on one GPU
- Goal: move standard cell libraries to a new process faster
- Claimed result: equal or better size, power, and delay
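The arithmetic and the acceptance bar behind that claim can be sketched in a few lines of Python. This is only an illustration: the `CellMetrics` type, the `matches_or_beats` check, and the normalized numbers are hypothetical and do not come from Nvidia. Only the 8 engineers, the 10 months, and the three metrics (size, power, delay) are taken from the figures above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CellMetrics:
    """Hypothetical per-cell metrics, normalized so the human design is 1.0."""
    area: float
    power: float
    delay: float


def person_months(engineers: int, months: float) -> float:
    """Total manual effort: headcount multiplied by duration."""
    return engineers * months


def matches_or_beats(generated: CellMetrics, human: CellMetrics) -> bool:
    """True only if the generated cell is no worse on every one of the three metrics."""
    return (
        generated.area <= human.area
        and generated.power <= human.power
        and generated.delay <= human.delay
    )


# Figures quoted in the article: 8 engineers for about 10 months.
print(person_months(engineers=8, months=10))  # 80

# Made-up example values, purely to exercise the check.
human = CellMetrics(area=1.00, power=1.00, delay=1.00)
generated = CellMetrics(area=0.97, power=1.00, delay=0.99)
print(matches_or_beats(generated, human))  # True
```

Requiring all three metrics to hold at once is the strict reading of “equal or better”; a real flow might well trade one metric against another, which this sketch does not model.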

PrefixRL targets a problem humans already know too well

Dally also pointed to PrefixRL, a separate internal tool aimed at a classic problem in adder design: where to place the lookahead stages in a carry-lookahead chain. Nvidia says the system can produce layouts that a person would not think of, while improving key metrics by about 20% to 30% compared with human-made solutions. That is a neat reminder that AI in chip design is not just about speed; it can also explore design space in ways that conventional engineering habits tend to miss.

That said, nobody should confuse this with fully automated chip creation. Dally was explicit that Nvidia is still far from letting AI run the entire design flow unsupervised. The real story is narrower and more practical: AI is becoming a force multiplier inside chip companies, helping them move faster on the tedious work and occasionally finding better answers than the people who trained it.
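For readers unfamiliar with the carry-chain problem mentioned above, the sketch below shows why it has a design space at all. It computes the same carries with two textbook prefix networks, a serial ripple chain and a Kogge-Stone network, and counts the combine nodes (a rough proxy for area and power) and the logic depth (a rough proxy for delay). Neither network is Nvidia’s; the article does not describe how its tool works internally, and the function names here are illustrative.

```python
def combine(hi, lo):
    """Prefix operator on (generate, propagate) pairs; `hi` covers the more significant bits."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def bit_signals(a, b, width):
    """Per-bit (generate, propagate) pairs: g = a AND b, p = a XOR b."""
    return [(((a >> i) & (b >> i)) & 1, ((a >> i) ^ (b >> i)) & 1) for i in range(width)]


def ripple_prefix(gp):
    """Serial chain: fewest nodes, but depth grows linearly with width."""
    out = list(gp)
    for i in range(1, len(out)):
        out[i] = combine(out[i], out[i - 1])
    return out, len(out) - 1, len(out) - 1  # prefixes, node count, depth


def kogge_stone_prefix(gp):
    """Kogge-Stone network: logarithmic depth, paid for with many more nodes."""
    out, nodes, depth, d = list(gp), 0, 0, 1
    while d < len(out):
        prev = out
        out = [combine(prev[i], prev[i - d]) if i >= d else prev[i]
               for i in range(len(prev))]
        nodes += len(prev) - d
        depth += 1
        d *= 2
    return out, nodes, depth


def add(a, b, width, prefix_network):
    """Add two unsigned `width`-bit integers using the given prefix network."""
    gp = bit_signals(a, b, width)
    prefixes, nodes, depth = prefix_network(gp)
    carries = [0] + [g for g, _ in prefixes]  # carries[i] is the carry into bit i
    total = carries[width] << width           # carry-out becomes the top bit
    for i, (_, p) in enumerate(gp):
        total |= (p ^ carries[i]) << i        # sum bit = propagate XOR carry-in
    return total, nodes, depth


print(add(200, 100, 8, ripple_prefix))       # (300, 7, 7)
print(add(200, 100, 8, kogge_stone_prefix))  # (300, 17, 3)
```

Both networks give the same sum, but one uses 7 nodes at depth 7 and the other 17 nodes at depth 3. Many valid networks sit between those two extremes, and that trade-off between size and speed is the kind of space an automated search can explore more widely than a designer working from a handful of known structures.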

ChipNeMo and BugNeMo turn internal knowledge into a shortcut

Beyond design optimization, Nvidia is also using internal language models called ChipNeMo and BugNeMo. They are trained and updated on the company’s own material, including RTL code and years of GPU architecture documents, which gives them a very specific advantage over generic chatbots that know a lot about everything and little about your actual codebase.

The practical upside is simple. Junior engineers can ask the model instead of repeatedly pinging senior colleagues, and bug reports can be summarized and routed to the right module or engineer faster. Large chipmakers have been moving in this direction for a while, but Nvidia is now describing a more mature in-house stack, not a side project with a demo attached.

The open question is how quickly rivals can copy this without Nvidia’s scale of proprietary design data. If the company really has turned AI into an overnight accelerator for process migration and layout work, competitors will feel pressure to build the same thing – because nobody wants to spend 10 months doing a job a GPU can finish before sunrise.